I’ve been doing some performance timings of file format support, comparing MS Office and OpenOffice. Most of the results are as expected, but some are surprising, and one in particular is quite disappointing.

But first, a few details of my setup. All timings, done by stopwatch, were from Office 2003 and OpenOffice 2.4.0 running on Windows XP, with all current service packs and patches. The machine is a Lenovo T60p, dual-core Intel 2.16 GHz with 2 GB of RAM. I took all the standard precautions: the disk was defragmented, and the test files were confirmed as defragmented using contig. No other applications were running and background tasks were all shut down.

For test files, I went back to an old favorite, George Ou’s (at the time with ZDNet) monster 50MB XLS file from his series of tests back in 2005. This file, although very large, is very simple. There are no formulas, indeed no formatting or styles. It is just text and numbers, treating a spreadsheet like a giant data table. So tests of this file will emphasize the raw throughput of the applications. Real world spreadsheets will typically be worse than this due to the additional overhead of processing styles, formulas, etc.

A test of a single file is not really that interesting. We want to see trends, see patterns. So I made a set of variations on George’s original file, converting it into ODF, XLS and OOXML formats, as well as making scaled down versions of it. In total I made 12 differently sized subsets of the original file, ranging down to a 437KB version, and created each file in all three formats. I then tested how long it took to load each file in each of the applications. In the case of MS Office, I installed the current versions of the translators for those formats: the Compatibility Pack for OOXML support, and the ODF Add-in for ODF support.

I find it convenient to report numbers per 100,000 spreadsheet cells. You could equally well use the original XLS spreadsheet size, or the number of rows of data, or any other correlated variable as the basis for comparison, but values per 100K cells are simple for anyone to understand.
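The normalization is just a rescaling. Here is a minimal sketch of it; the function name and the sample numbers (12 seconds, 400,000 cells) are made up for illustration and are not taken from the test data.

```python
def per_100k_cells(value, cell_count):
    """Scale a measurement (seconds, KB, ...) to a 100,000-cell basis."""
    if cell_count <= 0:
        raise ValueError("cell_count must be positive")
    return value * 100_000 / cell_count

# Illustrative numbers only: a 400,000-cell file that takes 12 seconds to load.
print(per_100k_cells(12.0, 400_000))  # 3.0 seconds per 100K cells
```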

I’ll spare you all the pretty pictures. If you want to make some, here is the raw data (CSV format). But I will give some summary observations.

For document sizes, the results are as follows:

- Binary XLS format = 1,503 KB per 100K cells

- OOXML format = 491 KB per 100K cells

- ODF format = 117 KB per 100K cells

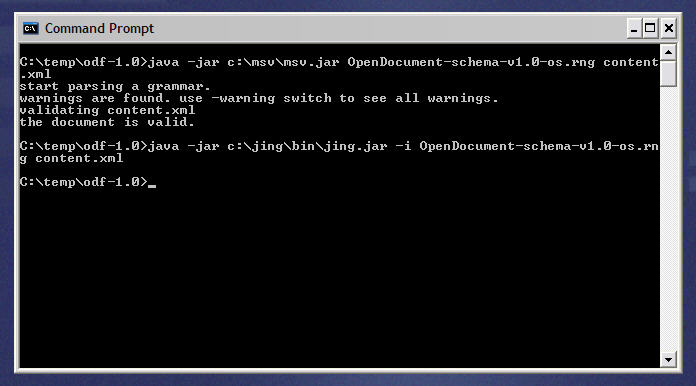

So the XML formats are far smaller than the legacy binary format. This is due to the added Zip compression that both XML formats use. Also, note that the ODF files are significantly smaller than the OOXML files, less than 1/4 the size on average. Upon further examination, the XML document representing the ODF content is larger than the corresponding XML in OOXML, as expected, due to its use of longer, more descriptive markup tags. However, the ODF XML compresses far better than the OOXML version, enough to overcome its greater verbosity and produce files smaller than their OOXML counterparts. The compression ratio (original/zipped) for ODF’s content.xml is 87, whereas the compression ratio for OOXML’s sheet1.xml is only 12. We could just mumble something about entropy and walk away, but I think this area could bear further investigation.
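If you want to check the compression ratios on your own files, both formats are ordinary Zip packages, so something like the following sketch should work. It uses Python's standard `zipfile` module; the function name is my own, and this is not necessarily how the numbers above were obtained.

```python
import zipfile

def compression_ratios(path):
    """Return {member name: uncompressed size / compressed size} for a Zip package."""
    ratios = {}
    with zipfile.ZipFile(path) as package:
        for info in package.infolist():
            # Skip directories and empty entries to avoid dividing by zero.
            if info.compress_size > 0:
                ratios[info.filename] = info.file_size / info.compress_size
    return ratios
```

Calling it on an ODF spreadsheet and looking up `content.xml` in the result gives the original/zipped ratio for the main content stream. For OOXML the sheet data lives deeper in the package, so it is easiest to print the whole dictionary and pick out the worksheet entry.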

Any ideas?

For load time, the times for processing the binary XLS files were:

- Microsoft Office 2003 = 0.03 seconds per 100K cells

- OpenOffice 2.4.0 = 0.4 seconds per 100K cells

Not too surprising. These binary formats are optimized for the guts of MS Office. We would expect them to load faster in their native application.

So what about the new XML formats? There has been recent talk about the “Angle Bracket Tax” for XML formats. How bad is it?

- Microsoft Office 2003 with OOXML = 1.5 seconds per 100K cells

- OpenOffice 2.4.0 with ODF = 2.7 seconds per 100K cells

For typically sized documents, you probably will not notice the difference. However, with the largest documents, like the 16-page, 3-million-cell monster sheet, the OOXML document took 40 seconds to load in Office and the ODF sheet took 90 seconds to load in OpenOffice, whereas the XLS binary took less than 2 seconds to load in MS Office.

OK. So what are we missing? Ah, yes, ODF format in MS Office, using their ODF Add-in.

- Microsoft Office 2003 with ODF, using the ODF Add-in = 74.6 seconds per 100K cells

Yup. You read that right. To put this in perspective, let’s look at a single test file, a 600K-cell file, as we load it in the various formats and editors:

- Microsoft Office 2003 in XLS format = 0.75 seconds

- OpenOffice 2.4.0 in XLS format = 3.03 seconds

- Microsoft Office 2003 in OOXML format = 8.28 seconds

- OpenOffice 2.4.0 in ODF format = 14.09 seconds

- Microsoft Office 2003 in ODF format = 515.60 seconds

Can someone explain to me why Microsoft Office needs almost 10 minutes to load an ODF file that OpenOffice can load in 14 seconds?
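For anyone who wants to check the arithmetic, here is a small sketch that expresses each of the five timings above as a multiple of a chosen baseline. The timings are the ones listed above; the dictionary labels and the function name are just my own shorthand.

```python
# Load times in seconds for the 600K-cell test file, as listed above.
load_times = {
    "MS Office 2003, XLS": 0.75,
    "OpenOffice 2.4.0, XLS": 3.03,
    "MS Office 2003, OOXML": 8.28,
    "OpenOffice 2.4.0, ODF": 14.09,
    "MS Office 2003, ODF (Add-in)": 515.60,
}

def slowdown(times, baseline):
    """Express every load time as a multiple of the baseline entry."""
    base = times[baseline]
    return {name: seconds / base for name, seconds in times.items()}

# Compare everything against OpenOffice loading its native ODF format.
relative = slowdown(load_times, "OpenOffice 2.4.0, ODF")
for name, factor in relative.items():
    print(f"{name}: {factor:.1f}x")
```

With OpenOffice's ODF load as the baseline, the ODF Add-in comes out between 36 and 37 times slower on this file.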

(I was not able to test files larger than this using the ODF Add-in since they all crashed.)

(Update: Since it is the question everyone wants to know, the beta version of OpenOffice 3.0 opens the OOXML version of that file in 49.4 seconds and Sun’s ODF Plugin for Microsoft Office loads this file in 30.03 seconds.)

This is one reason why I think file format translation is a poor engineering approach to interoperability. When OpenOffice wants to read a legacy XLS file, it does not approach the problem by translating the XLS into an ODF document and then loading the ODF file. Instead, it simply loads the XLS file, via a file filter, into the internal memory model of OpenOffice.

What is a file filter? It is like 1/2 of a translator. Instead of translating from one disk format to another disk format, it simply loads the disk format and maps it into an application-specific memory model that the application logic can operate on directly. This is far more efficient than translation. This is the untold truth that the layperson does not know. But this is how everyone does it. That is how we support formats in SmartSuite. That is how OpenOffice does it. And that is how MS Office does it for the file formats they care about. In fact, that is the way that Novell is doing it now, since they discovered that the Microsoft approach is doomed to performance hell.
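To make the distinction concrete, here is a toy sketch. It has nothing to do with the real code in any office suite: CSV stands in for the "foreign" format, JSON stands in for the application's "native" format, and a plain list of rows stands in for the memory model. All of the function names are invented for the illustration.

```python
import csv
import io
import json

def load_native(text):
    """Native loader: parse the application's own format (JSON here) into the memory model."""
    return json.loads(text)

def translate_csv_to_native(csv_text):
    """Translator: convert one serialized format into another serialized format."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    return json.dumps(rows)

def load_via_translator(csv_text):
    """Two passes: foreign format -> serialized native document -> memory model."""
    return load_native(translate_csv_to_native(csv_text))

def load_via_filter(csv_text):
    """One pass: foreign format -> memory model, with no intermediate document."""
    return list(csv.reader(io.StringIO(csv_text)))

sample = "a,1\nb,2\n"
# Both routes end up with the same in-memory rows; the filter just skips a step.
assert load_via_filter(sample) == load_via_translator(sample)
```

The translator route parses the foreign data, serializes a complete second document, and then parses that document again. The filter route does only the first of those three steps.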

So it is with some amusement that I watch Microsoft and others propose translation as a solution to interoperability, creating reports about translation, even a proposal for a new work item in JTC1/SC34 concerning file format translation, when the single concrete attempt at translation is such an abysmal failure. It may look great on paper, but it is an engineering disaster. What customers need is direct, internal support for ODF in MS Office, via native code, in a file filter, not a translator that takes 10 minutes to load a file.

The astute engineer will agree with the above, but will also feel some discomfort at the numbers. There is more here than can be explained simply by the use of translators versus import filters. That choice might explain a 2x difference in performance. A particularly poor implementation might explain a 5x difference. But none of this explains why MS Office is almost 40x slower in processing ODF files. Being that much slower is hard to do accidentally. Other forces must be at play.

Any ideas?