I’d like to synthesize some thoughts I’ve been having in recent weeks. But before I do that, let’s have a joke:

A Harvard Divinity School student reviews a proposed dissertation topic with his advisor. The professor looks over the abstract for a minute and gives his initial appraisal.

“You are proposing an interesting theory here, but it isn’t new. It was first expressed by a 4th Century Syrian monk. But he made the argument better than you. And he was wrong.”

So it is with some trepidation that I make an observation which may not be novel, well-stated, or even correct, but here it goes:

There is (or should be) an important relationship between patents and standards, or more precisely, between patent quality and standards quality.

As we all know, a patent is an exclusive property right, granted by the state for a limited period of time to an inventor in return for publicly disclosing the workings of his invention. In fact, the meaning of “to patent” was originally, “to make open”. We have a lingering sense of this in phrases like, “that is patently absurd”. So, some public good ensues from the patent disclosure, and the inventor gets a short-term monopoly in the use of that invention in return. It is a win-win situation.

To ensure that the public gets its half of the bargain, a patent may be held invalid if there is not sufficient disclosure, that is, if a “person having ordinary skill in the art” cannot “make and use” the invention without “undue experimentation”. The legal term for this requirement is “enablement”. If a patent application has insufficient enablement, it can be rejected.

For example, take the patent application US 20060168937, “Magnetic Monopole Spacecraft”, which claims that a spacecraft of a specified shape can be powered by alternating current and thereby induce a field of wormholes and magnetic monopoles. Once you’ve done that, the spacecraft practically flies itself.

The inventor reports that in one experiment he personally was teleported through hyperspace over 100 meters, and that in another he blew smoke into a wormhole, where it disappeared and came out of another wormhole. However, although the inventor takes us carefully through the details of how the hull of his spacecraft was machined, the most critical aspect, the propulsion mechanism, is alluded to but not really detailed.

(Granted, I may not be counted as a person skilled in this particular art. I studied astrophysics at Harvard, not M.I.T. Our program did not cover the practical applications of hyperspace wormhole travel.)

But one thing is certain: the existence of the magnetic monopole is still hypothetical. No one has shown conclusively that it exists. The first person who detects one will no doubt win the Nobel Prize in Physics. This is clearly a case of requiring “undue experimentation” to make and use the invention, and I would not be surprised if the application is rejected for lack of enablement.

I’d suggest that a similar criterion be used for evaluating a standard. When a company proposes that one of its proprietary technologies be standardized, it is making a similar deal with the public. In return for specifying the details of its technology and enabling interoperability, it gets a significant head start in implementing that standard, and will initially have the best and fullest implementation of it. The benefits to the company are clear. But to ensure that the public gets its half of the bargain, we should ask the question: is there sufficient disclosure to enable a “person having ordinary skill in the art” to “make and use” an interoperable implementation of the standard without “undue experimentation”? If a standard does not enable others to do this, then it should be rejected. The public and the standards organizations that represent it should demand this.

Simple enough? Let’s look at the new Ecma Office Open XML (OOXML) standard from this perspective. Microsoft claims that this standard is 100% compatible with billions of legacy Office documents. But is anyone actually able to use this specification to achieve this claimed benefit without undue experimentation? I don’t think so. For example, macros and scripts are not specified at all in OOXML. The standard is silent on these features. So how can anyone practice the claimed 100% backwards compatibility?

Similarly, there are a number of backwards-compatibility “features” which are specified in the following style:

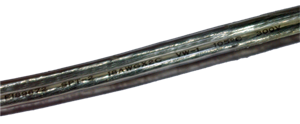

2.15.3.26 footnoteLayoutLikeWW8 (Emulate Word 6.x/95/97 Footnote Placement)

This element specifies that applications shall emulate the behavior of a previously existing word processing application (Microsoft Word 6.x/95/97) when determining the placement of the contents of footnotes relative to the page on which the footnote reference occurs. This emulation typically involves some and/or all of the footnote being inappropriately placed on the page following the footnote reference.

[Guidance: To faithfully replicate this behavior, applications must imitate the behavior of that application, which involves many possible behaviors and cannot be faithfully placed into narrative for this Office Open XML Standard. If applications wish to match this behavior, they must utilize and duplicate the output of those applications. It is recommended that applications not intentionally replicate this behavior as it was deprecated due to issues with its output, and is maintained only for compatibility with existing documents from that application. end guidance]

This sounds oddly like Fermat’s, “I have a truly marvelous proof of this proposition which this margin is too narrow to contain”, but we don’t give Fermat credit for proving his Last Theorem and we shouldn’t give Microsoft credit for enabling backwards compatibility. How is this description any different from the patent application that claims magnetic monopoles will drive hyperspace travel? The OOXML standard simply does not enable the functionality that Microsoft claims it contains.
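To make the gap concrete, here is a minimal sketch in Python of about all an independent implementer can do with such a flag: notice that it is present. The sample settings markup, the `UNDEFINED_BEHAVIOR` set, and the function name `undisclosed_compat_flags` are my own illustrative assumptions, as is the placement of the flag under a `w:compat` element in the document settings; none of this is taken from any real implementation.

```python
import xml.etree.ElementTree as ET

# WordprocessingML main namespace.
W_NS = "http://schemas.openxmlformats.org/wordprocessingml/2006/main"

# Hypothetical, hand-written settings fragment, used only for illustration.
SETTINGS_XML = f"""<w:settings xmlns:w="{W_NS}">
  <w:compat>
    <w:footnoteLayoutLikeWW8/>
  </w:compat>
</w:settings>"""

# Flags whose behavior the specification defers to a legacy application.
# Illustrative list: only the element quoted above is included.
UNDEFINED_BEHAVIOR = {"footnoteLayoutLikeWW8"}


def undisclosed_compat_flags(settings_xml: str) -> list[str]:
    """Return compat flags present in the markup that we cannot implement
    from the specification text alone.

    This sketch ignores any w:val attribute that might switch a flag off.
    """
    root = ET.fromstring(settings_xml)
    compat = root.find(f"{{{W_NS}}}compat")
    if compat is None:
        return []
    # Strip the "{namespace}" prefix to get each child's local name.
    names = [child.tag.rsplit("}", 1)[-1] for child in compat]
    return [name for name in names if name in UNDEFINED_BEHAVIOR]


if __name__ == "__main__":
    for flag in undisclosed_compat_flags(SETTINGS_XML):
        print(f"{flag}: present, but layout behavior is not specified")
```

Detecting the flag is trivial. The footnote placement it asks for is the part the specification leaves to “the output of those applications”, so the sketch can go no further than a warning.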

Similarly, Digital Rights Management (DRM) has been an increasingly prominent part of Microsoft’s strategy since Office 2003. As one analyst put it:

The new rights management tools splinter to some extent the long-standing interoperability of Office formats. Until now, PC users have been able to count on opening and manipulating any document saved in Microsoft Word’s “.doc” format or Excel’s “.xls” in any compatible program, including older versions of Office and competing packages such as Sun Microsystems’ StarOffice and the open-source OpenOffice. But rights-protected documents created in Office 2003 can be manipulated only in Office 2003.

This has the potential to make any other file format disclosure by Microsoft irrelevant. If they hold the keys to the DRM, then they own your data. The OOXML specification is silent on DRM. So how can Microsoft say that OOXML is 100% compatible with Office 2007, let alone legacy DRM’ed documents from Office 2003? The OOXML standard simply does not enable anyone else to practice interoperable DRM.

It should also be noted that the legacy Office binary formats are not publicly available. They have been licensed by Microsoft under various restrictive schemes over the years, for example, only for use on Windows, only for use if you are not competing against Office, etc., but they have never been simply made available for download. And they’ve certainly never been released under the Open Specification Promise. So lacking a non-discriminatory, royalty-free license for the binary file format specification, how can anyone actually practice the claimed 100% compatibility? Isn’t it rather unorthodox to have a “standard” whose main benefit is claimed to be 100% compatibility with another specification that is treated as a trade secret? Doesn’t compatibility require that you disclose both formats?

Now what is probably true is that Microsoft Office 2007, the application, is compatible with legacy documents. But that is something else entirely. That fact would be true even if OOXML were not approved as an ISO standard, or even if it were not an Ecma standard. In fact, Microsoft could have stuck with proprietary binary formats in Office 2007 and this would still be true. But by the criterion of whether a person having ordinary skill in the art can practice the claimed compatibility with legacy documents, this claim falls flat on its face. By accepting this standard, without sufficient enablement in the specification, the public risks giving away its standards imprimatur to Microsoft without getting a fair disclosure or the expectation of interoperability in return.