March 6th Update: Microsoft appears to have updated the www.browserchoice.eu website and corrected the error I describe in this post. More details on the fix can be found in The New & Improved Microsoft Shuffle. However, I think you will still find the following analysis interesting.

-Rob

Introduction

The story first hit last week on the Slovakian tech site DSL.sk. Since I am not linguistically equipped to follow the Slovakian tech scene, I didn’t hear about the story until it was brought up in English on TechCrunch. The gist of these reports is this: DSL.sk did a test of the “ballot” screen at www.browserchoice.eu, used in Microsoft Windows 7 to prompt the user to install a browser. It was a Microsoft concession to the EU, to provide a randomized ballot screen for users to select a browser. However, the DSL.sk test suggested that the ordering of the browsers was far from random.

But this wasn’t a simple case of Internet Explorer showing up more in the first position. The non-randomness was pronounced, but more complicated. For example, Chrome was more likely to show up in one of the first 3 positions. And Internet Explorer showed up 50% of the time in the last position. This isn’t just a minor case of it being slightly non-random. Try this test yourself: Load www.browserchoice.eu in Internet Explorer and press refresh 20 times. Count how many times the Internet Explorer choice is on the far right. Can this be right?

The DSL.sk findings have led to various theories, most resting on the likely mistaken assumption that the non-randomness is intentional. Does Microsoft have secret research showing that the 5th position is actually chosen more often? Is the Internet Explorer random number generator not random? There were also comments asserting that the tests proved nothing, and the results were just chance, and others saying that the results are expected to be non-random because computers can only make pseudo-random numbers, not genuinely random numbers.

Maybe there was cogent technical analysis of this issue posted someplace, but if there was, I could not find it. So I’m providing my own analysis here, a little statistics and a little algorithms 101. I’ll tell you what went wrong, and how Microsoft can fix it. In the end it is a rookie mistake in the code, but it is an interesting mistake that we can learn from, so I’ll examine it in some depth.

Are the results random?

The ordering of the browser choices is determined by JavaScript code on the BrowserChoice.eu web site. The core logic is in the GenerateBrowserOrder function. I took that function and its supporting functions, put them into my own HTML file, added some test driver code and ran it for 10,000 iterations on Internet Explorer. The results are as follows:

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 1304 | 2099 | 2132 | 2595 | 1870 |
| 2 | 1325 | 2161 | 2036 | 2565 | 1913 |
| 3 | 1105 | 2244 | 1374 | 3679 | 1598 |
| 4 | 1232 | 2248 | 1916 | 590 | 4014 |
| 5 | 5034 | 1248 | 2542 | 571 | 605 |

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 0.1304 | 0.2099 | 0.2132 | 0.2595 | 0.1870 |
| 2 | 0.1325 | 0.2161 | 0.2036 | 0.2565 | 0.1913 |
| 3 | 0.1105 | 0.2244 | 0.1374 | 0.3679 | 0.1598 |
| 4 | 0.1232 | 0.2248 | 0.1916 | 0.0590 | 0.4014 |
| 5 | 0.5034 | 0.1248 | 0.2542 | 0.0571 | 0.0605 |

This confirms the DSL.sk results. Chrome appears more often in one of the first 3 positions and I.E. is most likely to be in the 5th position.
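A test driver of this kind can be sketched in a few lines. What follows is an illustrative reconstruction, not the actual file used for these tests: `tallyPositions` and `microsoftShuffle` are hypothetical names, and `microsoftShuffle` simply applies the random comparator that is examined later in this post.

```javascript
// Hypothetical test driver: counts how often each item lands in each position.
// counts[position][itemIndex] is the number of runs in which the item that
// started at itemIndex ended up at position.
function tallyPositions(shuffle, items, iterations) {
  const counts = items.map(() => new Array(items.length).fill(0));
  for (let run = 0; run < iterations; run++) {
    const order = shuffle(items.slice()); // shuffle a fresh copy each run
    order.forEach((item, position) => {
      counts[position][items.indexOf(item)]++;
    });
  }
  return counts;
}

// The shuffle under test: a sort driven by a random comparator.
function microsoftShuffle(items) {
  return items.sort(() => 0.5 - Math.random());
}

const browsers = ["I.E.", "Firefox", "Opera", "Chrome", "Safari"];
console.table(tallyPositions(microsoftShuffle, browsers, 10000));
```

The exact counts depend on the sort implementation of whichever JavaScript engine runs the code, so a different browser should not be expected to reproduce the table above.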

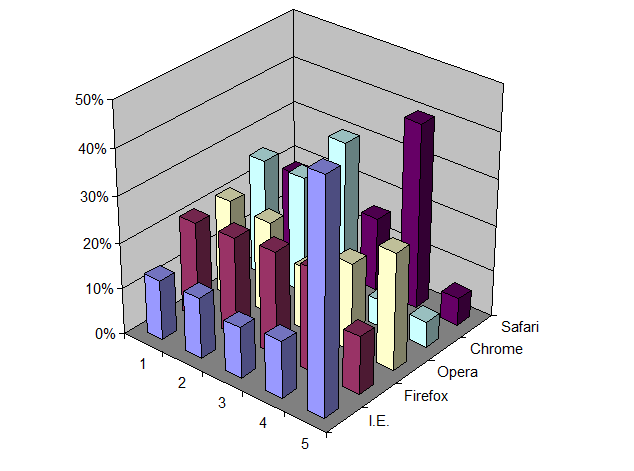

You can also see this graphically in a 3D bar chart:

But is this a statistically significant result? I think most of us have an intuitive feeling that results are more significant if many tests are run, and if the results also vary much from an even distribution of positions. On the other hand, we also know that a finite run of even a perfectly random algorithm will not give a perfectly uniform distribution. It would be quite unusual if every cell in the above table was exactly 2,000.

This is not a question one answers with debate. To go beyond intuition you need to perform a statistical test. In this case, a good test is Pearson’s Chi-square test, which tests how well observed results match a specified distribution. In this test we assume the null-hypothesis that the observed data is taken from a uniform distribution. The test then tells us the probability that the observed results can be explained by chance. In other words, what is the probability that the difference between observation and a uniform distribution was just the luck of the draw? If that probability is very small, say less than 1%, then we can say with high confidence, say 99% confidence, that the positions are not uniformly distributed. However, if the test returns a larger number, then we cannot disprove our null-hypothesis. That doesn’t mean the null-hypothesis is true. It just means we can’t disprove it. In the end we can never prove the null hypothesis. We can only try to disprove it.

Note also that having a uniform distribution is not the same as having uniformly distributed random positions. There are ways of getting a uniform distribution that are not random, for example, by treating the order as a circular buffer and rotating through the list on each invocation. Whether or not randomization is needed is ultimately dictated by the architectural assumptions of your application. If you determine the order on a central server and then serve out that order on each invocation, then you can use non-random solutions, like the rotating circular buffer. But if the ordering is determined independently on each client, for each invocation, then you need some source of randomness on each client to achieve a uniform distribution overall. But regardless of how you attempt to achieve a uniform distribution the way to test it is the same, using the Chi-square test.
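The circular-buffer idea can be sketched as follows. This is only an illustration, under the assumption that a central server keeps a counter of how many times the list has been served; `rotatedOrder` and `counter` are hypothetical names.

```javascript
// Returns a copy of items rotated left by (counter mod items.length) places.
function rotatedOrder(items, counter) {
  const shift = counter % items.length;
  return items.slice(shift).concat(items.slice(0, shift));
}

const browsers = ["I.E.", "Firefox", "Opera", "Chrome", "Safari"];
for (let counter = 0; counter < browsers.length; counter++) {
  console.log(rotatedOrder(browsers, counter).join(", "));
}
```

Over any five consecutive invocations each browser occupies each position exactly once, so the distribution of positions is uniform even though nothing here is random.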

Using the open source statistical package R, I ran the chisq.test() routine on the above data. The results are:

X-squared = 13340.23, df = 16, p-value < 2.2e-16

The p-value is much, much less than 1%. So, we can say with high confidence that the results are not random.
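For readers without R at hand, the statistic itself is easy to compute. The sketch below assumes the calculation that `chisq.test()` applies to a matrix, with expected counts derived from the row and column totals, and it reproduces the X-squared value for the Internet Explorer table above. The function name `chiSquare` is made up for this illustration, and no p-value is computed; the statistic can instead be compared with 32.0, the approximate 1% critical value for 16 degrees of freedom.

```javascript
// Pearson's chi-square statistic for a table of observed counts.
// Expected counts come from the row and column totals.
function chiSquare(observed) {
  const rowTotals = observed.map(row => row.reduce((a, b) => a + b, 0));
  const colTotals = observed[0].map((_, c) =>
    observed.reduce((sum, row) => sum + row[c], 0));
  const total = rowTotals.reduce((a, b) => a + b, 0);
  let statistic = 0;
  observed.forEach((row, r) => {
    row.forEach((count, c) => {
      const expected = (rowTotals[r] * colTotals[c]) / total;
      statistic += (count - expected) ** 2 / expected;
    });
  });
  const df = (observed.length - 1) * (observed[0].length - 1);
  return { statistic, df };
}

// Counts from the Internet Explorer run (rows are positions 1 to 5).
const ieCounts = [
  [1304, 2099, 2132, 2595, 1870],
  [1325, 2161, 2036, 2565, 1913],
  [1105, 2244, 1374, 3679, 1598],
  [1232, 2248, 1916, 590, 4014],
  [5034, 1248, 2542, 571, 605],
];
const result = chiSquare(ieCounts);
console.log(result.statistic.toFixed(2), result.df); // 13340.23 16
```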

Repeating the same test on Firefox also gives non-random results, but in a different way:

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 2494 | 2489 | 1612 | 947 | 2458 |
| 2 | 2892 | 2820 | 1909 | 1111 | 1268 |
| 3 | 2398 | 2435 | 2643 | 1891 | 633 |
| 4 | 1628 | 1638 | 2632 | 3779 | 323 |
| 5 | 588 | 618 | 1204 | 2272 | 5318 |

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 0.2494 | 0.2489 | 0.1612 | 0.0947 | 0.2458 |
| 2 | 0.2892 | 0.2820 | 0.1909 | 0.1111 | 0.1268 |
| 3 | 0.2398 | 0.2435 | 0.2643 | 0.1891 | 0.0633 |
| 4 | 0.1628 | 0.1638 | 0.2632 | 0.3779 | 0.0323 |
| 5 | 0.0588 | 0.0618 | 0.1204 | 0.2272 | 0.5318 |

On Firefox, Internet Explorer is more frequently in one of the first 3 positions, while Safari is most often in last position. Strange. The same code, but vastly different results.

The results here are also highly significant:

X-squared = 14831.41, df = 16, p-value < 2.2e-16

So given the above, we know two things: 1) The problem is real. 2) The problem is not related to a flaw only in Internet Explorer.

In the next section we look at the algorithm and show what the real problem is, and how to fix it.

Random shuffles

The browser choice screen requires what we call a “random shuffle”. You start with an array of values and return those same values, but in a randomized order. This computational problem has been known since the earliest days of computing. There are 4 well-known approaches: 2 good solutions, 1 acceptable (“good enough”) solution that is slower than necessary, and 1 bad approach that doesn’t really work. Microsoft appears to have picked the bad approach. But I do not believe there is some nefarious intent to this bug. It is more in the nature of a “naive” algorithm, like the bubble sort, that inexperienced programmers inevitably will fall upon when solving a given problem. I bet if we gave this same problem to 100 freshman computer science majors, at least one of them would make the same mistake. But with education and experience, one learns about these things. And one of the things one learns early on is to reach for Knuth.

The Art of Computer Programming, Vol. 2, section 3.4.2 “Random sampling and shuffling” describes two solutions:

- If the number of items to shuffle is small, then simply put all possible orderings in a table and select one ordering at random. In our case, with 5 browsers, the table would need 5! = 120 rows.

- “Algorithm P” which Knuth attributes to Moses and Oakford (1963), but is now known to have been anticipated by Fisher and Yates (1938) so it is now called the Fisher-Yates Shuffle.
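A minimal sketch of the Fisher-Yates shuffle in JavaScript follows. It is not the code from Knuth or from any particular web page; the function name and the optional `random` parameter (added so that the function can be exercised with a predictable source) are assumptions made for this illustration.

```javascript
// Shuffles items in place and returns the same array.
// random must return a number in [0, 1), like Math.random.
function fisherYatesShuffle(items, random = Math.random) {
  for (let i = items.length - 1; i > 0; i--) {
    // Pick j uniformly from 0..i, the part of the array not yet fixed.
    const j = Math.floor(random() * (i + 1));
    const tmp = items[i];
    items[i] = items[j];
    items[j] = tmp;
  }
  return items;
}

console.log(fisherYatesShuffle(["I.E.", "Firefox", "Opera", "Chrome", "Safari"]));
```

Each pass makes one swap, so the work is linear in the number of items.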

Another solution, one I use when I need a random shuffle in a database or spreadsheet, is to add a new column, fill that column with random numbers and then sort by that column. This is very easy to implement in those environments. However, sorting is an O(N log N) operation where the Fisher-Yates algorithm is O(N), so you need to keep that in mind if performance is critical.

Microsoft used none of these well-known solutions in their random solution. Instead they fell for the well-known trap. What they did is sort the array, but with a custom-defined comparison function or “comparator”. JavaScript, like many other programming languages, allows a custom comparator function to be specified. In the case of JavaScript, this function takes two elements of the array and returns a value which is:

- <0 if the first element should be sorted before the second

- 0 if the two elements are equal, which is to say you are indifferent as to what order they are sorted

- >0 if the first element should be sorted after the second

This is a very flexible approach, and allows the programmer to handle all sorts of sorting tasks, from making case-insensitive sorts to defining locale-specific collation orders, etc.

In this case Microsoft gave the following comparison function:

    function RandomSort (a,b)
    {
        return (0.5 - Math.random());
    }

Since Math.random() should return a random number chosen uniformly between 0 and 1, the RandomSort() function will return a random value between -0.5 and 0.5. If you know anything about sorting, you can see the problem here. Sorting requires a self-consistent definition of ordering. The following assertions must be true if sorting is to make any sense at all:

- If a<b then b>a

- If a>b then b<a

- If a=b then b=a

- If a<b and b<c then a<c

- If a>b and b>c then a>c

- If a=b and b=c then a=c

All of these statements are violated by the Microsoft comparison function. Since the comparison function returns random results, a sort routine that depends on any of these logical implications would receive inconsistent information regarding the progress of the sort. Given that, the fact that the results were non-random is hardly surprising. Depending on the exact sort algorithm used, it may just do a few exchange operations and then prematurely stop. Or, it could be worse. It could lead to an infinite loop.
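One way to see the inconsistency concretely is to check a comparator against two of these rules. The helper below is a hypothetical sketch (`isConsistentComparator` is not part of any library): it tests the first two kinds of rule, "if a<b then b>a" and "if a<b and b<c then a<c", over every pair and triple of a small sample. An ordinary numeric comparator passes, a comparator that ignores its arguments fails at once, and a random comparator like `RandomSort` can be expected to fail on practically every run.

```javascript
// Hypothetical helper: returns true if compare behaves like a consistent
// ordering on the sample values (antisymmetric and transitive).
function isConsistentComparator(compare, values) {
  const sign = (a, b) => Math.sign(compare(a, b));
  for (const a of values) {
    for (const b of values) {
      // If a sorts before b, then b must sort after a (and vice versa).
      if (sign(a, b) !== -sign(b, a)) return false;
      for (const c of values) {
        // If a < b and b < c, then a < c must hold as well.
        if (sign(a, b) < 0 && sign(b, c) < 0 && !(sign(a, c) < 0)) return false;
      }
    }
  }
  return true;
}

const sample = [1, 2, 3, 4, 5];
console.log(isConsistentComparator((a, b) => a - b, sample)); // true
console.log(isConsistentComparator(() => 1, sample));         // false
console.log(isConsistentComparator(() => 0.5 - Math.random(), sample));
```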

Fixing the Microsoft Shuffle

The simplest approach is to adopt a well-known and respected algorithm like the Fisher-Yates Shuffle, which has been known since 1938. I tested with that algorithm, using a JavaScript implementation taken from the Fisher-Yates Wikipedia page, with the following results for 10,000 iterations in Internet Explorer:

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 2023 | 1996 | 2007 | 1944 | 2030 |
| 2 | 1906 | 2052 | 1986 | 2036 | 2020 |
| 3 | 2023 | 1988 | 1981 | 1984 | 2024 |
| 4 | 2065 | 1985 | 1934 | 2019 | 1997 |
| 5 | 1983 | 1979 | 2092 | 2017 | 1929 |

| Position | I.E. | Firefox | Opera | Chrome | Safari |
|---|---|---|---|---|---|
| 1 | 0.2023 | 0.1996 | 0.2007 | 0.1944 | 0.2030 |
| 2 | 0.1906 | 0.2052 | 0.1986 | 0.2036 | 0.2020 |
| 3 | 0.2023 | 0.1988 | 0.1981 | 0.1984 | 0.2024 |
| 4 | 0.2065 | 0.1985 | 0.1934 | 0.2019 | 0.1997 |
| 5 | 0.1983 | 0.1979 | 0.2092 | 0.2017 | 0.1929 |

Applying Pearson’s Chi-square test we see:

X-squared = 21.814, df = 16, p-value = 0.1493

In other words, these results are not significantly different than a truly random distribution of positions. This is good. This is what we want to see.

Here it is, in graphical form, to the same scale as the “Microsoft Shuffle” chart earlier:

Summary

The lesson here is that getting randomness on a computer cannot be left to chance. You cannot just throw Math.random() at a problem and stir the pot, and expect good results. Random is not the same as being casual. Getting random results on a deterministic computer is one of the hardest things you can do with a computer and requires deliberate effort, including avoiding known traps. But it also requires testing. Where serious money is on the line, such as with online gambling sites, random number generators and shuffling algorithms are audited, tested and subject to inspection. I suspect that the stakes involved in the browser market are no less significant. Although I commend DSL.sk for finding this issue in the first place, I am astonished that the bug got as far as it did. This should have been caught far earlier, by Microsoft, before this ballot screen was ever made public. And if the EC is not already demanding a periodic audit of the aggregate browser presentation orderings, I think that would be a prudent thing to do.

If anyone is interested, you can take a look at the file I used for running the tests. You type in an iteration count and press the execute button. After a (hopefully) short delay you will get a table of results, using the Microsoft Shuffle as well as the Fisher-Yates Shuffle. With 10,000 iterations you will get results in around 5 seconds. Since all execution is in the browser, use larger numbers at your own risk. At some large value you will presumably run out of memory, time out, hang, or otherwise get an unsatisfactory experience.

Actually, Fisher-Yates might not be the simplest. Another approach that works well in languages with associative arrays is to do this:

1. Make an associative array whose keys are the elements you wish to shuffle, and whose values are random numbers.

2. Sort the keys by their values.

My Javascript is rusty, so I’ll give an example in Perl, and leave it to someone else to show how it would be done in Javascript:

    sub randomize_array
    {
        my %hash = map {$_ => rand} @_;
        return sort {$hash{$a} <=> $hash{$b}} keys %hash;
    }

That takes an array, and returns a shuffled version of that array.
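For what it is worth, the same idea might look like this in JavaScript. It is a sketch rather than a line-for-line port: it tags each array position with a random key instead of using the elements as hash keys, so duplicate elements are kept, and the names `tagAndSortShuffle` and `random` are invented for the example.

```javascript
// Returns a new array with the items ordered by randomly assigned keys.
// random must return a number in [0, 1), like Math.random.
function tagAndSortShuffle(items, random = Math.random) {
  return items
    .map(item => ({ item, key: random() }))   // tag every item once
    .sort((a, b) => a.key - b.key)            // consistent numeric comparator
    .map(tagged => tagged.item);              // strip the tags again
}

console.log(tagAndSortShuffle(["I.E.", "Firefox", "Opera", "Chrome", "Safari"]));
```

Unlike a random comparator, the keys here are fixed before sorting starts, so the sort always sees a self-consistent ordering.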

@moose, that is essentially the solution I described as an “acceptable solution that is slower than necessary”. That’s how an end user can do a random shuffle in a spreadsheet: insert a new column parallel to your data column(s). Copy the formula “=rand()” into each cell in that column. Select the entire range, including your existing data and the data in the new column. Sort, using the new random column as your sort key. Same concept.

But the interesting thing is that doing a sort is not necessary at all to do a random shuffle. Of course, with only 5 elements, the fact that sorting is asymptotically slower than Fisher-Yates isn’t going to matter. Your solution would work just fine at that scale.

“Never attribute to malice that which can be adequately explained by stupidity.” –Hanlon’s razor

Why do you want equal distribution rather than random? This screen will only appear to the user once, and will be completely random. Also, to determine that your results are in fact right, you are going to need 10,000 iterations of your test that takes 10,000 sorted rankings. This will show you how you don’t understand what “random” means.

In fact, your first p-value is even lower… 2.2E-16 is the lowest result R’s chisq.test can produce :P

@Darren, In this post I’m not getting into the economic or competitive reasons of “why” a random ordering is desired here. Let’s just take it from the point where Microsoft and the EC agreed to settle the outstanding anti-trust suit, and where as part of the settlement Microsoft agreed to randomize the browser ballot screen. So take that as a requirement given to a programmer. As was noted originally by DSL.sk, and confirmed by my tests, the delivered ballot screen is not random.

The statistical analysis here is pretty basic. You don’t need to do 10,000 repetitions of my test to show, to a very high confidence level, that it is not random. The p-value itself (less than 2.2e-16) showed that the distribution was so uneven that the chances were less than 1 in 4,500,000,000,000,000 that results this lopsided would come from a process that was actually producing random orderings. Analysis of the algorithm shows why the results are non-random.

Actually, the tag-and-sort algorithm is not a perfect shuffle, whereas Fisher-Yates is. See http://okmij.org/ftp/Haskell/perfect-shuffle.txt for an explanation.

What’s more interesting is the question: does it benefit Microsoft? It would appear to actually work against them, since competing browsers appear more often at the front of the list.

Seems to me that would perfectly satisfy the aims of people behind the Browser Choice List. It seems like they had a hidden goal of reducing Microsoft’s presence, and this will do it, ever so slightly.

I think by “equal distribution” he means an “equal probability of appearing in a given spot” which is what you’d expect if a number was truly random.

@Antii, you are certainly correct. But I think that if you have a small number of elements to shuffle, you should avoid the kind of key collisions that would cause a problem. And at that point the performance liabilities would also tend to lead one away from a sort-dependent solution and toward Fisher-Yates.

It would be interesting to see at what point the key collisions cause a problem. How many elements do you need for it to fail the chi-square test with p-value < 1% ?

An alternate shuffle, that you could compute in N time, assuming all elements are already in an array:

for each element in the array:

generate a random value between [1 and size of array] (assumption = 1 is the index of the first value)

swap current element with the element in the randomly generated position

Every element in the array has at least 1 chance to get moved to a random position.

Many years ago (early 80’s) I saw in some magazine an elegant and fast O(N) solution for doing this, as follows:

for (i=0; i<N; i++) A[i] = i;

for (i=0; i<N; i++) swap( A[i], A[ rand(N) ] );

I never analysed the result to see if mathematically it is identical to a true random permutation, but intuitively it looks that way and definitely was good enough for the games I wrote back then… :-)

Neither script seems to use more memory for more iterations. Running the test in firefox on my machine, there was no difference in memory usage between 50,000 iterations and 5,000,000 anyway. It just took a lot longer to finish.

Using Chrome “4.0.249.89 unknown (38071)” which is latest non-dev according to the About screen, your script executes, displays the results, and then immediately flashes back to the input, blanking the results. It’s rather inconvenient.

Is there something I’m doing wrong?

Nice article and analysis – it’s always easy to forget about the theory behind the code once you’ve been though years of rushed projects.

For the third possible return case in the compare function possibilities section above, I think it should read “>0” instead of the first and third both being “<0” cases.

@Carlos, good catch. I’ll correct.

@Matt, I don’t have Chrome installed. But it could be an error in my script, or a compatibility issue. Anyone else seeing this behavior?

amnon: your solution is basically Fisher-Yates, except for a mistake in the rand function (you remembered it incorrectly). Compare with the solution on the Wikipedia page.

Just curious, what’s the third good solution?

I don’t see how this code could cause an infinite loop. Please elaborate?

When localizing educational computer games from a US manufacturer for the German market, I’ve seen my fair share of failed shuffle implementations. The typical implementation was

for (i=0; i<n; i++) {

r = random(n);

swap(a[i], a[r]);

}

This is very similar to Fisher-Yates but doesn't result in an equal distribution.

The problem in Chrome is that you’re submitting the form, which causes the page to re-render. Easiest workaround is to change the button from “type=submit” to “type=button”.

That’s not the naive solution. That’s just … wrong. I don’t think anyone would expect the outcome to be random if you sort by a weird changing comparator function. That outcome will depend on the sorting algorithm used and just be weird.

The naive solution would be to assign each element a random element in the output array. I think that would work too, but be slower and involve floating point divisions and stuff that better algorithms can dispose of.

Software-only solutions are flawed: the software is running on hardware incapable of producing a random number. No matter how complex the software, it is limited by the capability of the hardware.

You need to use a hardware input such as Geiger readings from a radioactive source (which is a random scientific event).

Michael,

@Brandt, thanks. I changed the form. Hopefully it works better with Chrome. Would be interested in how the results are there, and in Opera and Safari as well.

@Nick, do you see the problem with that algorithm? The items at the end of the array can be swapped out less frequently, though there are more opportunities for values to be swapped in there. In other words, elements in the front have a greater chance of being put back in the front from later exchanges. So it is biased with respect to the original sort order.

@Steve, for example a bubble sort could easily end up in an infinite loop. Remember, that sort will continue making passes over the entire array until everything is pairwise ordered. So with a random comparator, the chance of any pass finding all elements sorted is 1 in 2^N, regardless of the number of passes previously made. OK. Not infinite, but can get quite long quite fast. With a 100 element array, I doubt it would finish in our lifetime. Of course, one hopes that bubble sorts would not be used in Microsoft code. But one also would have thought that a broken random shuffle algorithm would not be used either.

@Tim — Re: the third good solution… That is simply bad editing on my part. I was going to go into the original Yates-Fisher versus modern variations. But I never got there. I’ll clean that up.

@Albert

You’re assuming that the last position on the list is the least desirable; unfortunately you seem to have missed out on an important lesson here.

You mix up the concepts. The distribution of browsers is random but non-uniform. They are completely different things. I can even be pedantic and say EU only required randomness, not a uniform distribution, but I don’t have access to the agreement between EU and MS and therefore will not say that.

Nick, what is your evidence that swapping(i, rand(n)) for i = 0..n doesn’t make for equal distribution? Can you prove it with test runs? Every element is given a shot at being swapped with any other element at least once. Some will be swapped multiple times, but that’s ok, since everything is symmetrical.

What would be problematic is if one swapped (rand(n), rand(n)) n times, which is what I saw people do when I used to ask this as an interview question.

@Darren Kopp: If the order didn’t matter, you’d just show the same order to every user and be done with it. Presumably the order is considered to exert some influence, thus they want each browser to fill each spot a roughly equal number of times in the grand scheme of things, so that the differences even out overall. Also, there’s not necessarily only one spot that’s best.

“Where serious money is on the line, such as with online gambling sites, random number generators and shuffling algorithms are audited, tested and subject to inspection.”

Well real gambling sites should use the dice-o-matic. That’s real random :-)

http://www.youtube.com/watch?v=7n8LNxGbZbs Amazing…

It is possible to use solution 1 (n!) without computing all possibilities using a recursive-type algorithm. Here is a python illustration of what this might look like (in c it is done better recursively):

inputs:

A=

r=

m=

    while m > 1:
        m = m - 1
        yield A.pop( r / m! )
        r = r % m!
    yield A.pop(r)
    yield A.pop()
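The same idea can be written out as a runnable JavaScript sketch: a single number between 0 and N! - 1 is decoded, one factorial digit at a time, into one specific ordering, so the table of all orderings never has to be stored. The function names are hypothetical, and plain Number arithmetic is assumed, which limits this to small arrays (N! must stay below 2^53).

```javascript
function factorial(n) {
  let f = 1;
  for (let k = 2; k <= n; k++) f *= k;
  return f;
}

// Decodes index (0 <= index < items.length!) into one ordering of items.
function permutationFromIndex(items, index) {
  const pool = items.slice();
  const result = [];
  let r = index;
  for (let m = pool.length - 1; m >= 0; m--) {
    const f = factorial(m);
    const pick = Math.floor(r / f);        // which remaining item comes next
    result.push(pool.splice(pick, 1)[0]);  // remove it from the pool
    r = r % f;                             // what is left selects the rest
  }
  return result;
}

// Picks one of the N! orderings at random; random is assumed to behave
// like Math.random.
function randomPermutation(items, random = Math.random) {
  const index = Math.floor(random() * factorial(items.length));
  return permutationFromIndex(items, index);
}

console.log(randomPermutation(["I.E.", "Firefox", "Opera", "Chrome", "Safari"]));
```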

Tom, regarding why swapping (i, rand(n)) for i = 0..n isn’t a good solution: Consider how this algorithm would run on a set of three items. There are 6 possible permutations of 3 elements, and the desired goal is a uniform distribution – each permutation having equally likely probability.

Each iteration through the loop there are 3 possible outcomes, each equally likely. And this is done 3 times, so you have 3^3 = 27 different paths, each with a probability of 1/27. Now count how many of those paths lead to each permutation. If the distribution is uniform then there should be the same number of paths to each permutation, but there is no way to divide 27 by 6 evenly, so some permutations will have more paths than others, and thus the distribution will not be uniform.

Note that decreasing the range of the random() by 1 each iteration solves this particular problem because there will be N! paths (instead of N^N), and the number of permutations is N! – each permutation has exactly one path (and all paths are equally likely).
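This counting argument is small enough to check by brute force. The sketch below (the function name is hypothetical) walks all 27 sequences of random choices for a three-element array and counts how many of them lead to each final ordering.

```javascript
// Enumerates every possible run of the "swap i with rand(n)" loop on n items
// and counts how many runs end in each permutation.
function countNaiveShufflePaths(n) {
  const counts = new Map();
  const choices = new Array(n).fill(0);
  const total = n ** n;
  for (let path = 0; path < total; path++) {
    // Decode path into one choice of swap target per loop iteration.
    let rest = path;
    for (let i = 0; i < n; i++) {
      choices[i] = rest % n;
      rest = Math.floor(rest / n);
    }
    const a = Array.from({ length: n }, (_, k) => k);
    for (let i = 0; i < n; i++) {
      const r = choices[i];
      [a[i], a[r]] = [a[r], a[i]];
    }
    const key = a.join("");
    counts.set(key, (counts.get(key) || 0) + 1);
  }
  return counts;
}

console.log(countNaiveShufflePaths(3));
```

For three items, three orderings are reached by 4 paths and three by 5, so their probabilities are 4/27 and 5/27 rather than the 1/6 that a uniform shuffle requires.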

@Sinetheta: The algorithm is flawed, but in this application, who cares. The concept of randomness in this application is to prevent bias towards Microsoft’s own browser and nothing more.

You write “Depending on the exact search algorithm used, it could have done a few exchanges operations and then prematurely stopped. It could have been worse. It could have lead to an infinite loop.”

To be precise, ECMA 262 (the Javascript specification) says that if a non-stable comparison function is used, then the results are implementation-defined. And the Microsoft implementation (at least of JScript) specifies that a non-stable comparison function *will* yield a random order. http://msdn.microsoft.com/en-us/library/k4h76zbx(VS.85).aspx

So: no infinite loop. (but I think they should rewrite their specification to say “will yield an unspecified order”.)

You can do it in O(1), also. Just create a permutation generator which permutes the set of array indices. This is easiest and most efficient when dealing with sets with a power-of-2 order.

I use this to “shuffle” records (DNS, etc) with an iterator that uses a callback much like at issue here. The iterator simply uses a counter, i. For i = 1..N it just returns array[permute(i)] at each step. By the time i is N you’ve returned each and every record, but out-of-order. No intermediate step was necessary. permute(i) is O(1).

How do you create a permutation generator? You can create a simple 2^N-bit generator using a single random seed in combination with exclusive-ors, shifts, and optionally substitution boxes (those operations simply “mix” the bits; other operations might not guarantee a one-to-one mapping of input to output). There are other ways to do it, I’m sure, but this is what I’m most familiar with. This is the basis for many cryptographic algorithms. If all you wish to achieve is some degree of statistically random distribution, you don’t need to be an expert. Just hack together an algorithm and test to verify the strength of the distribution. Indeed, if you’re doing anything other than a straight Fisher-Yates shuffle with a known good PRNG, then you want to test anyhow.
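As a rough illustration of this idea, and not the commenter's actual code, the sketch below builds a one-to-one mapping on `bits`-bit integers out of an exclusive-or with a seed and two xor-shifts. Each step is invertible, so every index from 0 to 2^bits - 1 is returned exactly once. The function name, the shift amounts and the parameter names are arbitrary assumptions, and a mix this simple makes no claim about statistical quality; as the comment says, it would have to be tested.

```javascript
// Maps i (0 <= i < 2^bits) to another value in the same range, one-to-one.
// bits must be between 1 and 30 so that the bit operations stay in range.
function permuteIndex(i, seed, bits) {
  const mask = (1 << bits) - 1;
  let x = (i ^ seed) & mask;    // xor with the seed
  x ^= x >>> 1;                 // right xor-shift
  x = (x ^ (x << 2)) & mask;    // left xor-shift, truncated to bits
  return x;
}

// Visit all 8 records in a scrambled order without building a shuffled copy.
const records = ["a", "b", "c", "d", "e", "f", "g", "h"];
for (let i = 0; i < records.length; i++) {
  console.log(records[permuteIndex(i, 5, 3)]);
}
```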

Of course, O(1) might still take more wall time than the other algorithms for smaller sets. But even so, I typically use it because it doesn’t require any extra data structures other than two integers for the seed and counter. The simplicity extends to the actual code, as well, which I find most important. Plus, there are many occasions when you wish to randomize a set but then only retrieve a subset. With this method, there’s no wasted work.

A simpler solution is this two-step process.

1. Microsoft, the browser makers, and the EC to sit down together ONCE in a room, draw the browser names from a hat and write down the order in which they appear.

2. Then when those browsers are presented to the user, they are shown always in the same order as chosen in 1), but with a randomly-added shift applied. This involves a single, simple random() call that is hard to get wrong.

For example, if the order the browser names were drawn from the hat was Safari, Explorer, Firefox, Opera, the user would only ever see one of the following lists presented on the screen:

Safari, Explorer, Firefox, Opera

Explorer, Firefox, Opera, Safari

Firefox, Opera, Safari, Explorer

Opera, Safari, Explorer, Firefox

It’s hard to screw up the implementation, and it’s demonstrably fair.

@Anon 4:24 – You hit the nail on the head. I doubt any of the EU or MS officials or programmers know the difference. Being pedantic like you however, I’d reverse the requirement and say that EU should have required just a uniform distribution. In my humble opinion, “randomness” is NOT required. Here’s an example:

Imagine this scenario: The computer upon Windows installation contacts a MS site that uses a global installation counter – each new installation would increase the counter from N to (N+1) and then present a browser order according to (N modulo 5!). This is a totally deterministic process, with no randomness at all (statistical tests for randomness would fail because of the autocorrelation), which however would lead to perfect uniformity: at any given time instant, each browser would have been placed in each of the 5 positions with a percentage of precisely 20%, as required !!!

The same kind of uniformity could be produced by using the installation serial number (licence) of Windows: since the licence key space is well-defined, the order of browsers could be also well (uniformly) defined from the serial number itself. There might be a problem with volume licences, but VLKs are a small percentage of total installations.

However, on a single offline computer, with no knowledge of history (what ballot was presented globally) or without a licence key, programmers have to resort to mathematics in order to produce uniform (not necessarily random) distributions. This is an application of the law of large numbers: if the ballot is uniform on the same computer, it will be uniform globally.

In conclusion, the only requirement should be that the browser ballot should produce uniform results. If the ballot operation is going to be performed online, this is an easy task. Otherwise, the only way to uniformity is through randomness – and as the fine articles have shown, this is not a trivial problem.

You know you talk only about one set of random positions.

But besides that i think there is another random part in this game

(well statistics is origin is gaming math after-all)..

The fact that also unknowing people (should) choose randomly.

However people more often these days look at how much bling bling valeu something has.

And simply this results in a graphical evaluation of icons.

Yep, I know it’s not great, but that’s psychology at work, and so I think Firefox is better off…

To get a purely random option for a web browser, people should guess a number and get an unknown browser based on that number. At least that would be the correct way. Or, for example, create a random number based on mouse movement.

Anyway, I think you and I know that any math-based shuffle technique is always a pseudo-random generator, unless you get some external random data, like quantum noise random engines. Some CPUs these days have such a function, but not all home PCs have such options.

Anyway, I think the best result of this EU case is that Microsoft now creates its backend applications to work with Firefox and some others. You are no longer forced to use Internet Explorer because your Exchange server only works with it; these days their new server products (finally) respect other web standards. Don’t get me wrong, Microsoft isn’t against standards, but most of them they copyright (though not all). As a result, Microsoft has finally stopped doing this in the web browser market.

Which is pretty nice if you’re a web designer, and cool if you’re a consumer who doesn’t like to be bound to Internet Explorer.

(now if only SAP went to firefox too… and some others..)

They should just put the list in a top-right to bottom-left diagonal orientation. This is how simultaneous lead billing problems are solved in Hollywood.

Dave,

I could not have asked for a better explanation of why that doesn’t work! Apparently, I should not hire myself. I also confirmed by experiment that the distribution was not even close to uniform for larger orders.

This javascript seems to work well after experimentation. It uses your idea of decreasing the random set on each iteration.

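function shuffleArrayInPlace(items) {
    // i is the size of the part of the array that is not yet shuffled
    for (var i = items.length; i > 1; ) {
        var j = Math.floor(Math.random() * i); // random index in [0, i)
        var t = items[--i];                    // decrement i, then swap items[i] and items[j]
        items[i] = items[j];
        items[j] = t;
    }
}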

@Rob Weir

You say you used the Fisher-Yates implementation from Wikipedia… but the Javascript implementation on that page has a comment in the code stating that the way the random number is derived will introduce modulo bias into the results. And looking at your code, I see the same comment. You couldn’t have missed it while copying and pasting, so I’m curious to know how you missed this caveat in your article since the whole point of the article was to lay bare (inadvertent) bias.

You make a very good point, but I think that you miss your own point in the summary. This issue isn’t about throwing a random number at something, it’s about sorting with a variable comparator. You point to this with the example of a database: just assign a random number to each element and then sort on that number, rather than generating a random number in the comparator. For all the fuss on Slashdot, this really is just a simple error, but one that should have been picked up in any code review. Great article though.
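The "assign a random number to each element, then sort on that number" idea can be sketched as follows. This is only an illustration: the function name `shuffleBySortKey` and the injectable `random` parameter are made up for this example. The point is that every key is fixed before sorting starts, so the comparator gives consistent answers.

```javascript
// Sketch: shuffle by tagging each item with one random key, then sorting on the keys.
// The name shuffleBySortKey and the `random` parameter are hypothetical.
function shuffleBySortKey(items, random = Math.random) {
  return items
    .map((item) => ({ item: item, key: random() })) // decorate: one fixed key per item
    .sort((x, y) => x.key - y.key)                  // consistent comparator on the keys
    .map((entry) => entry.item);                    // undecorate
}

console.log(shuffleBySortKey(["IE", "Firefox", "Opera", "Chrome", "Safari"]));
```

Two items could in principle draw the same key, but with double-precision keys and five items that is rare enough not to matter here.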

@Anon, You need to worry about modulo bias when the number of possible combinations of your elements is an appreciable fraction of the number of random numbers your generator can produce. In this case we have only 5! = 120 possible orderings, so we’re fine, presuming that Internet Explorer has a non-pathological PRNG. The test of the results with the Fisher-Yates Shuffle suggests this is the case. But if you have a larger array, say representing a deck of 52 cards, then modulo bias can be more of a problem. Note that this is not a specific weakness of the shuffling algorithm. It occurs with the other shuffling algorithms as well. It is simply a statement that the PRNG is inadequate to represent the number of possible states in the problem.
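For the narrower kind of modulo bias, where a random integer from a fixed range is reduced to a smaller range with `%`, a common remedy is rejection sampling. The sketch below is an illustration only: `defaultRand32` and `unbiasedRandomInt` are made-up names, and building a 32-bit integer from Math.random() is an assumption about the generator being good enough for that.

```javascript
// Hypothetical helper: a uniform integer in [0, 2^32) built from Math.random().
function defaultRand32() {
  return Math.floor(Math.random() * 0x100000000);
}

// Returns an integer in [0, n) without modulo bias, given a source of
// uniform integers in [0, 2^32). Draws that fall in the uneven tail are rejected.
function unbiasedRandomInt(n, rand32 = defaultRand32) {
  const range = 0x100000000;
  if (!Number.isInteger(n) || n < 1 || n > range) {
    throw new RangeError("n must be an integer between 1 and 2^32");
  }
  const limit = range - (range % n); // largest multiple of n that fits in the range
  let r;
  do {
    r = rand32();
  } while (r >= limit);              // retry until r is below the limit
  return r % n;
}

console.log(unbiasedRandomInt(5));
```

For n = 5 the rejected tail is a single value out of 2^32, which is why the effect is negligible for a five-item ballot.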

The agreement reached with the EU just says that the top 5 browsers should appear “randomly”. So the requirement could have been more precise. But still, that’s not an excuse for such terrible code.

For policy geeks, here is the agreement: http://ec.europa.eu/competition/antitrust/cases/decisions/39530/en.pdf

“The lesson here is that getting randomness on a computer cannot be left to chance.”

Oh ho, Mr. Algorithm Man, you just made my day.

For the lazy among us could you show how to 1) assign the data to a variable and 2) how to run the chisq.test() against it in R?

@David, You do the chi-square test on the raw counts. You first get the table into a CSV file on disk: headers in quotes, separated by commas, and then the rows, with numbers separated by commas. You don’t want the “Position” column. You just want the counts.

Then, in R, try this:

data <- read.csv("/path/data.csv")
attach(data)
chisq.test(data)

FYI, Excel has a Chi-Squared test. Look up CHITEST in the help. I believe it returns the P-value.

I can prove that the qsort() function I have on hand as source code terminates on an ill-behaved comparison function. But yeah order by random() isn’t going to be very uniform for it.

It is ironic that Rob was able to analyze the bug and the solution so thoroughly because the code was in an open source javascript file.

If the code had been developed in the first place in typical open source fashion, the bug may have never made it into production.

By the way, I think another solution that only requires generating one random number is to use the factoradic representation of the permutations. See Wikipedia entry for Factoradic in the subsection on permutations. It is an algorithm for finding the Nth permutation given an integer N. That would let you generate a random integer N from 0 to 119 and compute from that the Nth permutation of the set {0, 1, 2, 3, 4}. That is elegant in that you only need one random number, but if you squint the right way at the algorithm for finding the Nth permutation it looks an awful lot like what you go through for Fisher-Yates.

Actually, with only five items to shuffle, just about any way of doing other than what Microsoft did would work. You could even generate one random number and compute it mod 5, mod 4, mod 3, and mod 2, to determine which of the five to choose first, then which of the remaining 4, remaining 3, remaining 2, and the one that’s left.
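A minimal sketch of the factoradic idea, assuming a hypothetical function name `nthPermutation`. It peels off one factoradic digit per position; note that it divides the index by the base after taking each remainder, so that the digits are independent of one another.

```javascript
// Sketch: map an index in [0, n!) to a permutation of `items` using
// factoradic (factorial number system) digits. nthPermutation is a made-up name.
function nthPermutation(items, index) {
  const pool = items.slice();
  const digits = [];
  // Least significant digit first: remainder mod 1, mod 2, ..., mod n,
  // dividing the index by the base after each step.
  for (let base = 1; base <= pool.length; base++) {
    digits.push(index % base);
    index = Math.floor(index / base);
  }
  const result = [];
  // The most significant digit picks from the full pool, the next from what is left, etc.
  for (let k = digits.length - 1; k >= 0; k--) {
    result.push(pool.splice(digits[k], 1)[0]);
  }
  return result;
}

// One random number in [0, 120) selects one of the 5! orderings.
const browsers = ["IE", "Firefox", "Opera", "Chrome", "Safari"];
console.log(nthPermutation(browsers, Math.floor(Math.random() * 120)));
```

Each of the 120 indices maps to a different ordering, so a uniform index gives a uniform permutation.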

IE, with its “world’s most widely used browser” culmination, is most likely to be placed last, and it is kept away from the long wall of Opera descriptive text, while most likely to be next to the short and unthreatening (also in market terms) Safari. UI designers will have tested enough people to find out what they are most likely to choose and how the choice can be influenced; the “naive” algorithm has taken advantage of this while providing an excuse to the powers that saw political advantage in forcing Microsoft to accommodate for its competitors.

The only naive thing here is the geek so overwhelmed by his understanding of first-year statistics and computer science that he forgets that business is the application of technology to profit, not the perfection (by some Platonic ideal, I assume) of technology itself. You are being forced to do something you don’t want to do and you can afford the smartest team on your side — why wouldn’t you employ them to resist this force?

(Reading the MSDN documentation, of course, a Microsoft JScript sort abused like this returns items in a random order. Since reading the documentation was the first thing that came to mind for me, before performing a statistical test on the implementation and making proud guesses as to what went “wrong”, I couldn’t help but give a wry smile at the evident sense of humour that the ballot developer has.)

Nice article, but there is a major mistake!

In the case of this problem, a unique user/PC will get this script to run once. In order to repeat your test, you would have to use 10,000 machines at 10,000 different times, spread over some period. By doing so, each random call will be “independent” of the other calls. In your case, each call is somehow dependent on the previous CPU clock, instruction … thus never random.

In the real world case, where the Microsoft script is running, You will get real random distribution.

So my suggestion to you is this:

Add the script to this page, and let it RUN and report back the result . After 10,000 users visiting this page, you can then POST the new results. I would love to see them. You can limit one run per IP.

Regards,

Joseph

AloSmart.Com

Although I am a scientist, I am not a statistician and am a very mediocre programmer. Can someone describe for me in layman’s terms why the solution Microsoft’s programmer used doesn’t work? To my eye it seems that the code above should produce a nice array of random numbers which could subsequently have been sorted.

In addition, why did the programmer use “0.5 – rand()” instead of just “rand()”?

Thanks!

Bill – Isn’t your algorithm O(n)? It seems that you do one step for each of n elements, which requires at least n operations. If your algorithm was O(1), it would take the same amount of time to shuffle 5 elements as 5 million elements, right?

Not meaning to be a jerk, just checking to see that I understand.

Interestingly there was very little bias for a 10k test using Chrome 4.1.249.1017

Position  I.E.  Firefox  Opera  Chrome  Safari
1         2446  2477     1646   2195    1236
2         2462  2439     1658   2207    1234
3         1879  1977     1697   1885    2562
4         1776  1689     2205   1902    2428
5         1437  1418     2794   1811    2540
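A table like this can be checked with the same chi-square statistic that R’s chisq.test() reports. The sketch below is a hand-rolled illustration (`chiSquareStatistic` is a made-up name, and it computes only the statistic, not the p-value). For a 5×5 table there are 16 degrees of freedom, and the 5% critical value is about 26.3.

```javascript
// Sketch: Pearson chi-square statistic for a table of counts.
// Expected count for a cell = (row total * column total) / grand total.
function chiSquareStatistic(table) {
  const rowSums = table.map((row) => row.reduce((s, v) => s + v, 0));
  const colSums = table[0].map((_, c) => table.reduce((s, row) => s + row[c], 0));
  const total = rowSums.reduce((s, v) => s + v, 0);
  let statistic = 0;
  for (let r = 0; r < table.length; r++) {
    for (let c = 0; c < table[r].length; c++) {
      const expected = (rowSums[r] * colSums[c]) / total;
      statistic += Math.pow(table[r][c] - expected, 2) / expected;
    }
  }
  return statistic;
}

// Counts copied from the Chrome table above (rows = positions, columns = browsers).
const chromeCounts = [
  [2446, 2477, 1646, 2195, 1236],
  [2462, 2439, 1658, 2207, 1234],
  [1879, 1977, 1697, 1885, 2562],
  [1776, 1689, 2205, 1902, 2428],
  [1437, 1418, 2794, 1811, 2540],
];
console.log(chiSquareStatistic(chromeCounts));
```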

If I am not wrong… on the ability to generate random numbers… UNIX has a /dev/random device that is supposed to be as old as Linux, and it picks up random electrical disturbances to generate random numbers… Do we have something like that in Windows, and if not, why not? That’d be truly random, no?

PHP shuffle()

Can someone please tell me how PHP implements shuffle()? I can find plenty of hits explaining how to use it (umm, it couldn’t be simpler really) but none explaining what lies behind it? But I’m inclined to bet it’s not based on Fisher-Yates. So what exactly IS it?

I’m surprised no one has checked which position is actually the most favorable. Everyone has assumed it’s the first slot. Are there behavioral studies to support that assumption? What if disinterested users typically click the last option?

User generated randomness!

I think the simplest solution in this case to achieve effective randomness and a uniform distribution is to just use system time to loop through all 120 possibilities. The screen would just change every second and since no-one can predict when a user is going to request the webpage: voila you have perfect randomness plus uniform distribution. Just not when you use a script, but then that wasn’t really the question…

@Mits: Sorry for being pedantic, too, but: I would agree that a truly random distribution is PROBABLY not required — but I believe it is harder to take a non-random with some bias and then prove that this bias does not matter, than just take a uniform random distribution which cannot by definition have any bias. For example, if we follow your proposal and just enumerate all the possible permutations and present them one-by-one, the browser in the first position stays there for quite some while. That would e.g. enable an attack where somebody who hates Firefox could issue a lot of simultaneous requests to “push Firefox off the first position”. Okay, maybe this is a bad example, but it illustrates the kind of things you have to think about when deliberately NOT taking a random distribution.

@Bill:

I doubt that O(1) calls to a truly random “n-element permutation generator” are really faster than O(n) calls to a random number generator. Your “permutation generator” would have to do something comparable to Fisher-Yates inside, otherwise it is presumably not correct.

I can solve the Travelling Salesman Problem in O(1), given a solve_tsp() function.

Also, you cannot “verify” the randomness of a process by running statistical tests, only fail to falsify it. “Testing can never show the absence of bugs, only their presence” (Dijkstra). If the randomness of that process is critical to the security of your application, I would hesitate to use some “hacked-together algorithm” using some swaps. Please use some tested-and-proven standard implementation.

Considering most people who view the browser choice screen will just want to keep browsing “like they used to” and will, after speaking to their technical friends, make sure that IE is still installed, it’s all a bit academic really. But great article and analysis.

(source: my own experiences over the last couple of days)

@Steve Weissman, I’m very aware of the business, legal and political context this ballot screen is in. Certainly, it does not require “platonic perfection”. I never suggested it did . That’s a red herring. But I doubt it is satisfactory that some browsers are put in the first place twice as often as others. And this just isn’t about Internet Explorer. Look at the disparity between Chrome and Safari as well. In the end, the degree this does or does not meet Microsoft’s business, legal and political needs will be illustrated by how quickly they fix the bug, now that they are aware of it. Come back in a few days and tell me that this was not important enough to warrant correction.

@Dorian, The problem appears to be the way the custom comparator function interacts with their sort() routine. Without seeing the source code for Internet Explorer, it is hard to say more. But typically sort() routines are written to give fast results given a fully-ordered comparator function. How they behave when given an unstable comparator function is going to be implementation dependent.
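To see the interaction for yourself in any browser or JavaScript engine, a small tally along these lines can help. It is a sketch, not the ballot page’s actual code: the helper names are made up, and the output of the comparator-based shuffle will differ from engine to engine because each sort() implementation reacts differently to an inconsistent comparator.

```javascript
// The comparator pattern under discussion: it ignores both arguments.
function randomComparator(a, b) {
  return 0.5 - Math.random();
}

// Shuffle attempt that hands the random comparator to the built-in sort().
function sortShuffle(items) {
  return items.slice().sort(randomComparator);
}

// Fisher-Yates shuffle on a copy, for comparison.
function fisherYates(items) {
  const a = items.slice();
  for (let i = a.length - 1; i > 0; i--) {
    const j = Math.floor(Math.random() * (i + 1)); // random index in [0, i]
    [a[i], a[j]] = [a[j], a[i]];
  }
  return a;
}

// counts[p][k] = number of times item k ended up in position p.
function tallyPositions(shuffle, n, iterations) {
  const counts = Array.from({ length: n }, () => new Array(n).fill(0));
  const items = Array.from({ length: n }, (_, k) => k);
  for (let t = 0; t < iterations; t++) {
    shuffle(items).forEach((item, pos) => { counts[pos][item]++; });
  }
  return counts;
}

console.table(tallyPositions(sortShuffle, 5, 10000));
console.table(tallyPositions(fisherYates, 5, 10000));
```

With a uniform shuffle every cell should hover around iterations / n, which is 2,000 here.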

@Joseph, are you suggesting that these results are not uniform because of non-randomness in the PRNG caused by the tests being run on a single machine rather than “spread out over some time”, and that if I used 10,000 different machines with 10,000 different IP addresses I’d get truly random results? If that were true, then my tests would show biased results when running the Fisher-Yates algorithm, since that relies on calls to Math.random() as well. But that algorithm works fine. So this suggests the problem is in the choice of algorithm, not a problem in the PRNG.

Lucian, Microsoft’s documentation doesn’t say that their sort function shuffles if the compare function isn’t consistent; that’s community-contributed content akin to the comments on PHP’s method documentation pages, not normative statements.

Awesome job with your use of R and explanation of sorting algorithms. What you failed to explain, however, is the relevance of your analysis to the end-user’s choice. This isn’t the Monty-Hall problem; the browser isn’t hidden behind the door. Presumably, the end-user is acquainted with one or more of the choices, and will select their *previously-chosen* preferred-browser. Where’s the evidence that browser share will be affected by ordering? Do you have *any*? Meh, this barely rises to the level of tempest in a teapot.

@John, It was not the purpose of this post to analyze the reasons for an unbiased browser ballot screen. I’m taking that as a requirement which a programmer needs to implement in their code. In this case the requirement comes from an agreement made between Microsoft and the EU to settle their anti-trust case. It would be a fascinating discussion, I am sure, to debate why other browser vendors insisted on having a randomized ballot, and why Microsoft agreed to do this, but that would only make a too-long post even longer. But if you want to go down that route, I’d start with a read of Jakob Nielsen’s “The Power of Defaults”.

A couple of years ago, I saw research showing that when a man opens a newspaper, he is attracted mostly by the titles in the upper right part of the newspaper (with a probability of 36%, where normally it should be 25%).

And we must also take the fact, that user visiting BrowserChoice can also scroll to the right (and then the first places are not visible), into account…

Am I supposed to care about this issue? When someone makes me an offer, I don’t know about you, but I have other things in mind than the algorithm used to present me a choice. I am not a normal tech, not nerd enough I think…

Rob – you said you replicated DSL’s results, and then asked “is that statistically significant?”. You shouldn’t have needed to run your chi-squared test!

It’s reasonably well known in the world of statistics that someone ran a test and concluded that “Israeli fighter pilots have more girl children than boys”. Whoops. That’s what past data says. Only if future data also agrees can you decide that the first study meant anything (and if the second study *does* produce the *same* result, then you can conclude with a high degree of certainty that you have picked up something of significance).

I don’t know what the chances were of DSL producing the results they did. But the chances of your test coming up with the *same* results by chance are pretty minuscule. Just because you’ve won the lottery once, it doesn’t increase your chances of doing it again. The mere fact that you could reproduce DSL’s results said that MS’s “random order” wasn’t random, without having to run any other tests at all.

(though proving it for sure with chi-squared wasn’t a bad idea…)

Cheers,

Wol

@Wol, if the DSL.sk test was based on only 10 iterations, and my test was based on only 10 iterations, then if the results matched, you might still doubt the significance. When I first saw the DSL.sk results I was very skeptical. It looked very much like they were cherry picking their results. That’s what led me to try to reproduce it. If you have a 5×5 table of outcomes, the chances of all of them being 2,000 is less than any given one of them being 2,000. Ditto for having them all be in an arbitrary range, say 1800-2200. My initial concern was that, short of doing a Chi-square test, our intuition fails us. There is a hindsight bias if you run the test and then decide which combinations should be tested for significance. The Chi-square test neatly takes this into account, looking at the whole of the table.

So I set out to show that the results indeed were random, but when I ran the tests, I found that the DSL.sk observations were accurate, and that the results were indeed biased. Thus this blog post.

Some things to try, if anyone feels like pursuing this further:

1) Repeat the same tests, on Internet Explorer, but instead of using Math.random(), use a source of true random numbers, such as from www.randomnumbers.info. You probably need around 100,000 random numbers for 10,000 iterations of the test. Put them into a Javascript array and then iterate through them. Demonstrate that the algorithm used is flawed even when given that as input.

2) Or alternatively, dump out the pseudo random numbers actually used by I.E. and run them through the Diehard test battery: http://www.phy.duke.edu/~rgb/General/dieharder.php
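For the first suggestion, one possible way to feed pre-generated numbers into the test is a small wrapper that hands them out one at a time. This is only a sketch: `makeListSource` is a made-up name, and the numbers in the usage example are placeholders, not real downloaded data.

```javascript
// Sketch: turn an array of pre-generated numbers in [0, 1) into a
// drop-in replacement for Math.random(). makeListSource is a hypothetical name.
function makeListSource(numbers) {
  let next = 0;
  return function () {
    if (next >= numbers.length) {
      throw new Error("ran out of pre-generated random numbers");
    }
    return numbers[next++];
  };
}

// Placeholder values for illustration; a real run would load the downloaded list here.
const trueRandom = makeListSource([0.71, 0.12, 0.55, 0.93, 0.08, 0.64, 0.31, 0.87, 0.46, 0.22]);

// The comparator under test, now drawing from the list instead of Math.random().
function listComparator(a, b) {
  return 0.5 - trueRandom();
}
```

Throwing when the list runs dry, rather than wrapping around, keeps a run from silently reusing numbers.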

A thought on the comparison function.

The effect of this comparison function is rather mysterious given that the sorting algorithm used by javascript differs for various browsers. This can have rather nasty effects. Any algorithm that checks whether the array is sorted before terminating is quite likely to go into a loop with nondeterministic running time, i.e. there exist incredibly improbable but never terminating sequences of computation. This can happen with, say, bubble sort checking that no swap has been made (http://en.wikipedia.org/wiki/Bubble_sort#Pseudocode_implementation).

But is it possible for this to run just fine? I am somewhat confident that the sequence of comparisons that quicksort would make would satisfy rules 1-6. (To handwave, this is because no pair of elements is compared twice, and once a pair has been separated by a pivot they can never be compared.) It is not necessarily going to give a random permutation. If the pivot is selected a certain way, say always from the back of the list, the position of that element is going to follow a binomial distribution within its range. It will appear after k elements, where k is the number of things the comparison sort said it was greater than in a range containing n elements. (This is the easiest to see with the first pivot.)

Now here is the stumper, well at least it stumped me. If quicksort is done with uniform random pivot selection, do we have a “good” random permutation algorithm? My suspicion is that we do, but I would love to know anybody else’s thoughts on this.

Houston, we’ve got a problem: http://www.javascriptkit.com/javatutors/arraysort.shtml

Scroll to the bottom…

So what if the results are rigged to show IE on the right side on a horizontal list of 5 or 6 items? Which side will people pick most often? The answer is the one on the right. See this link here: http://www.newsweek.com/id/210194

It seems that microsoft was using some carefully planned algorithms to benefit them, at least so it would seem in a small list where the majority of right-handers will pick the items on the right side of the screen, rather than the better browsers on the left. Luckily, I use my left hand, so I am immune until such a time as they can figure out my handedness. This would be my guess as to why MS would want to show up last on the list, especially if there is a menu on a horizontal plane. :) Cool, huh?

What is really instructive here is that Microsoft, some of the “best” programmers on earth according to their proponents, are too stupid to compute a random permutation. And, apparently, too arrogant to even look up the correct algorithm.

Lots of Microsoft bugs are the result of this arrogance. This kind of carelessness and arrogance is, I think, the major reason why all Microsoft products are such junk. They are successful, in a profit sense, only because of a combination of illegal marketing activities, abuse of the legal and patent system, and an almost unlimited ability to spread lie-based propaganda. It only works because the general public is so terribly ignorant of science and mathematics.

@Renee Marie Jones

Ridiculous comment. The method in question was “not invented here”

as seen from Daniel Elstner’s post:

“Houston, we’ve got a problem: http://www.javascriptkit.com/javatutors/arraysort.shtml

Scroll to the bottom…”

Yes, Microsoft just went with a recommended method, and anti-Microsoft zealots are no less vulnerable, including the ones handing out blanket condemnations (which would probably be the whole lot).

There have been a few suggestions that my test is biased because it requests random numbers in a tight loop, and all on one machine, rather than on different machines, running at different times. So I modified my test to dump out a list of all of the random numbers retrieved in the calls to Math.random() during a 10,000 iteration run. You are welcome to download them and apply whatever tests you wish to them. I don’t see anything here out of the ordinary.

The interesting thing was how many times Math.random() was actually called. It was called 272,991 times for 10,000 iterations of the Microsoft Shuffle. So that tells us that every sort operation of the 5 element array required around 27 calls to their comparator function. Interesting. But as anyone who has seen a magician do a card trick knows, the intensity of the shuffle says nothing about the randomness of the results.

@Rob: Is it always the same number of calls? I’d expect the number of calls to the comparison function to be random as well.

Also, the whole discussion about bias in the analysis completely misses the point. This is broken code, plain and simple. An API is being misused, the result is undefined behavior. It’s purely by chance that they even got something that looks like a random permutation at all.

@Daniel, the number of calls varies, but averages out to close to 27.
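Counting the calls is easy to reproduce by wrapping the comparator in a counter. The sketch below uses a made-up helper name, `averageComparatorCalls`; the number it prints depends on which sort algorithm the JavaScript engine uses, so it need not match the figure quoted above.

```javascript
// Sketch: average number of comparator calls per sort() of an n-element array
// when the comparator returns a random answer. The helper name is hypothetical.
function averageComparatorCalls(n, iterations) {
  let calls = 0;
  const countingComparator = function (a, b) {
    calls++;                       // one more call to the comparator
    return 0.5 - Math.random();    // the random "comparison" result
  };
  for (let t = 0; t < iterations; t++) {
    const items = Array.from({ length: n }, (_, k) => k);
    items.sort(countingComparator);
  }
  return calls / iterations;
}

console.log(averageComparatorCalls(5, 10000));
```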

@Bugstomper, there is nothing in the EcmaScript standard that requires the use of quicksort to implement the sort() function. So it is not a safe way to code a random shuffle in Javascript. But still that is interesting to know.

Thinking about Tim’s comment on quicksort, I came up with a slightly less hand-wavy argument why the Microsoft Shuffle should work ok if the sort algorithm is quicksort – You can write out the steps of any one execution of quicksort as a series of binary choices that progress through a binary tree of all possible runs. Since the algorithm never compares the same pair of elements twice, then making a random choice at each comparison could only have the effect of selecting one of the possible execution paths, with all being possible and all being equally likely. It would have the effect of choosing a permutation at random, sorting it with quicksort, and using the result to let you know what the initial random permutation was. It would require as many calls to random() as you have comparisons, which with quick_sort is O(n log(n)).

But paraphrasing Knuth, the above is only a proof of correctness, so we don’t know if it will work without testing. I coded up a variation of the Javascript quicksort code from http://en.literateprograms.org/Quicksort_(JavaScript) in which I added an argument to provide the comparison function, and added to the test here a copy of Microsoft Shuffle that calls quick_sort(array, RandomSort) instead of array.sort(RandomSort).

I also added calculation of the time in each one, var startTime = (new Date).getTime(); before the loop that iterates the requested number of times, and outputting ((new Date).getTime() - startTime) before the table of results.

The Microsoft Shuffle using quicksort produced the same statistically even distribution as the Fisher-Yates algorithm. The times were interesting. On my MacBook using Firefox 3.6 for 100,000 iterations the incorrect Microsoft Shuffle took 2.28 seconds, the quicksort Microsoft Shuffle took 4.61 seconds (but got correct results), and the Fisher-Yates one took just 0.185 seconds.

@Rob, I would like to ask you one thing: both the incorrect Javascript version and the Fisher-Yates algorithm use Math.random(). You were able to empirically prove (using chi-square) that Fisher-Yates gives a uniformly distributed permutation, but since Math.random() is behind them both, it’s hard to see how even n(n-1)/2 shuffles of the Javascript function would not produce a uniformly random result. I mean, if Math.random() is the only important thing here, then the Fisher-Yates algorithm would also fail, right?

I mean the empirical evaluation is fine but is there an analytical answer as to why the average distribution is not random in the MS version?

@Sid, Since we don’t have Microsoft’s Internet Explorer code to inspect, there is no way to know. Who knows what IE is really doing behind the scenes? It is possible that they are not even using a single sort routine. They could be branching to one routine for long arrays, another optimized for short arrays and maybe even some special logic to “unroll” the sort logic for very short arrays of 2-5 elements.

Firefox gives non-uniform output as well. Maybe we can look at the sort() implementation there? Anyone have a direct link to that source file?

@Bugstomper So does that not mean that the only explanation here is that there is something other than just the sort function being called… It’s not just quicksort or any sort for that matter… Forget the incorrectness… This has just to do with generating a random permutation of N elements. That Javascript function might have been defined

function randomresult(a,b){
    return 0.5-Math.random();
}

for all we care…

And by calling O(NlogN) or O(N^2) calls to any comparison sort, the results should be uniformly random…

Some thing else is obviously wrong…

I’m sorry, but the page layout does not allow increasing the font size in Firefox with ctrl-+ and reading the text without scrolling left and right. That is far more annoying than a poorly done browser choice. Who needs that?

Well – I would go for a simple solution: Pick a browser from 5 by random, from the rest pick one, pick one from 3, pick one from 2, and you’re done. 5 calls to random while waiting on a user reaction is nothing worth considering performance issues.

Scala:

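val r = new java.util.Random

// Pick a random element, put it first, then do the same with the rest.
def sort (n : List[Int]) : List [Int] = n match {
  case Nil => Nil
  case _ =>
    val x = r.nextInt (n.size)
    // patch removes the element at index x (List's "-" method no longer exists in current Scala)
    n (x) :: sort (n.patch (x, Nil, 1))
}

for (i <- (1 to 10)) println (sort (List(1, 2, 3, 4, 5)).mkString ("\t"))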

5 2 4 3 1

4 3 1 2 5

2 4 3 1 5

3 2 5 1 4

5 3 4 1 2

1 2 5 4 3

5 3 2 4 1

4 5 2 1 3

5 1 3 2 4

1 4 3 2 5

It’s just how you would do it by hand – like a lottery-machine works.

Rob, nice analysis. :)

Sadly, I can imagine making this kind of schoolboy error.

My implementation wouldn’t bother with any sorting:

* take a random item from the list of browsers B

* add it to the end of the displayed list.

* keep doing this till B is empty.

I think that’d work.
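The three steps above translate almost directly into Javascript. A minimal sketch, with a made-up function name `drawShuffle` and an optional `random` parameter added only to make it easy to test:

```javascript
// Sketch: repeatedly take a random item from the remaining browsers and
// append it to the displayed list, until nothing remains.
function drawShuffle(browsers, random = Math.random) {
  const remaining = browsers.slice();  // copy, so the caller's list is untouched
  const displayed = [];
  while (remaining.length > 0) {
    const k = Math.floor(random() * remaining.length); // random index into what is left
    displayed.push(remaining.splice(k, 1)[0]);         // remove it and show it next
  }
  return displayed;
}

console.log(drawShuffle(["IE", "Firefox", "Opera", "Chrome", "Safari"]));
```

This is the same process as drawing names from a hat, and it amounts to the original (non in-place) form of the Fisher-Yates shuffle.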

Nice work!

I can’t believe such an elementary mistake was committed, but I’m sure it will make a great example in an introductory algorithms course :-).

If in doubt, consult Knuth.

New glorious opportunity to run checks for “randomness”: Microsoft offers browser choices to Europeans (http://news.bbc.co.uk/2/hi/technology/8537763.stm) . The candidate alternative browsers now are 12 !!!

“The Opera, Firefox, Chrome, Safari and Internet Explorer browsers are randomly ordered on the first section of this screen. Another seven browsers, namely Sleipnir, Green Browser, Maxthon, Avant, Flock, K-meleon, and Slim, will be randomly ordered on the rest of the screen. They can be viewed by scrolling sideways. ”

I think one does not need a PhD to see why IE does not appear in the “second section of the screen”!

@Sid, the explanation as to what is going on with different sort algorithms can best be expressed with the old saying “Garbage in, garbage out.” The specification for the EcmaScript sort function is that the argument is a function of two arguments, call them a and b, which will be passed two elements of the array being sorted and must return a negative number, zero, or a positive number (e.g. -1, 0, or +1) depending on whether a comes before, is the same as, or comes after b in the sort order that you want.

The call to Math.random violates the terms of the specification, giving the sort algorithm garbage information about whether a comes before or after b. In the case of quicksort, the algorithm happens to act on the results of each comparison and not only never revisits the same pair, it separates the elements of the pair into two sections of the array that are then sorted separately. The bogus result of the compare function jumbles things up but doesn’t come back to haunt the rest of the processing.

Contrast that to a sort algorithm that does something like this three element example, calling the elements of the array a, b, and c:

compare(a,b), and compare(b,c).

If the results are a<b and b<c then the answer is a b c, with a 1/4 probability of getting this result.

If the results are a>b and b>c then the answer is c b a, again with a 1/4 probability of getting this result.

If the results are a<b and b>c then you need to look at compare(a,c) and get either a c b or c a b, each with 1/8 probability.

If the results are a>b and b<c then you also need to look at compare(a,c) and get either b c a or b a c, again each with 1/8 probability.

Result: Two of the six permutations each occur 1/4 of the time, the remaining four permutations each occur 1/8 of the time.

Yet a different sort algorithm that chooses what to compare in a different way would produce yet different inconsistent results that are not necessarily a uniform distribution even if compare() has a uniform random distribution.
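The three-element example can be checked by enumerating every combination of comparison outcomes and adding up the probabilities. The sketch below hard-codes that hypothetical three-element sort (it is not any real browser’s sort), with each comparison treated as a fair coin flip.

```javascript
// Sketch: exact outcome probabilities for the hypothetical three-element sort
// described above, when every comparison result is a fair coin flip.
function threeElementOutcomes() {
  const probs = {};
  const add = (perm, p) => { probs[perm] = (probs[perm] || 0) + p; };
  for (const aBeforeB of [true, false]) {
    for (const bBeforeC of [true, false]) {
      if (aBeforeB && bBeforeC) {
        add("a b c", 1 / 4);             // a<b and b<c: no third comparison needed
      } else if (!aBeforeB && !bBeforeC) {
        add("c b a", 1 / 4);             // a>b and b>c: no third comparison needed
      } else {
        for (const aBeforeC of [true, false]) {
          if (aBeforeB) {
            add(aBeforeC ? "a c b" : "c a b", 1 / 8); // a<b and b>c: b goes last
          } else {
            add(aBeforeC ? "b a c" : "b c a", 1 / 8); // a>b and b<c: b goes first
          }
        }
      }
    }
  }
  return probs;
}

console.log(threeElementOutcomes());
```

A uniform shuffle would give each of the six orderings probability 1/6, which no combination of 1/4 and 1/8 steps can produce.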

Fwip, Martin:

It would be O(N) if you wanted to loop over every index, true. I said O(1) for the following reason: If you have 128 elements and want to take 3 uniformly at random, using Fisher-Yates you would have to first shuffle all 128 elements in O(N) time, and then take, say, the top 3 items (O(N) again). Using the generator method, the first step isn’t needed. The “shuffle” is O(1)–initializing the generator–though subsequently taking 3 items is indeed O(N). So, yes, very strictly speaking whatever you’re doing is asymptotically O(N) either way, but a better way of looking at it is that a particular Fisher-Yates-employing method is O(N) + O(M) while the method I described is O(1) + O(M). The distinction can be useful.

As for “hacked-together algorithm”: For my DNS library I implemented a 16-bit permutation generator (for ports and QIDs) using a Luby-Rackoff Feistel construction where the strength of the construction is provably a function of the strength of the mixing function F. F needn’t be invertible–you can use SHA-512 if desired–but I used TEA. So, you can use this method with similar confidence as a Fisher-Yates shuffle. (You wouldn’t want to use TEA to protect your passwords, but as the mixing function for a 16-bit block cipher you can bet the resulting distribution will be quite indistinguishable from random.)
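For readers who have not seen the construction, here is a rough sketch of a 16-bit Feistel permutation in JavaScript. It is not the code from my library: the round function roundF below is a toy stand-in (it is not TEA and not cryptographically strong), and the function names and round keys are invented for the example. The point it illustrates is that the network is a bijection on 0..65535 no matter what the round function does, so "setting up the shuffle" is just choosing keys, and each permuted value is computed on demand.

```javascript
// Toy round function mapping an 8-bit half plus a round key to 8 bits.
// ASSUMPTION: this is a placeholder mixer, NOT TEA and not secure.
function roundF(half, roundKey) {
  let x = (half ^ roundKey) >>> 0;
  x = Math.imul(x, 0x9e3779b1) >>> 0; // multiply by an odd constant to mix bits
  return (x >>> 24) & 0xff;           // keep the top 8 bits
}

// Balanced Feistel network on 16-bit values (two 8-bit halves).
// Every distinct index in 0..65535 maps to a distinct output in 0..65535.
function feistel16(index, roundKeys) {
  let left = (index >>> 8) & 0xff;
  let right = index & 0xff;
  for (const k of roundKeys) {
    [left, right] = [right, left ^ roundF(right, k)];
  }
  return (left << 8) | right;
}

// Hypothetical round keys; in real use these would come from a random seed.
const exampleRoundKeys = [0x1234abcd, 0x0badf00d, 0x5eed5eed, 0x13572468];

// Take the first three values of the permutation without shuffling anything.
for (let i = 0; i < 3; i++) {
  console.log(feistel16(i, exampleRoundKeys));
}
```

How random the output looks depends entirely on the round function and the number of rounds, which is why a real mixing function belongs in place of the toy one.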

But, for “randomizing” my resource record iterator (e.g. to contact nameservers in non-linear order, or to “shuffle” SRV records with the same priority) I indeed hacked together a naive permutation box. Horses for courses.

Sorry about the bolding in the previous comment. I didn’t realize that the blog software would interpret the inequalities with variable b as the HTML bold tag :). Where it turns bold the HTML ate that I said “if a<b and b>c then”

Anyway, I think I located the source code for Firefox’s Javascript sort function in

http://hg.mozilla.org/mozilla-central/file/11c0a0745d39/js/src/jsarray.cpp

It uses the Merge Sort algorithm, except it has an optimization that preprocesses the array by sorting each chunk of four elements using an insertion sort. The Merge Sort would then split the array into two chunks, of size 3 and 2 or 2 and 3 (I didn’t figure out which it is doing), sort each, and then merge the results.

The merging process has the same kind of unevenness as in the example at the end of my previous comment. The merge algorithm goes like this:

merge(array_left, array_right) {
    while there are elements in both arrays {
        compare the first element of array_left with the first element of array_right
        pop the smaller one off its array and append it to the result
    }
    if any elements are left in one of the arrays append them to the end of the result
}

Let’s say that the sort is almost complete and it is ready for the final merge of array_left of length 2 containing elements a b, and array_right of length 3 containing c d e. There is a 1/4 chance that the next two random comparisons will select a and then b as the smaller, resulting in the output being a b c d e. Similarly there is a 1/8 chance of the result being a c b d e, but only a 1/16 chance of a c d b e, since that outcome needs four comparisons in a row to go a particular way. I haven’t worked out all the probabilities of different results with the initial insertion sort and every way you get merges, but just from that you can see nonuniform results from the merge sort algorithm.
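Here is a small sketch that works out the exact probabilities for that final merge. It assumes a textbook merge in which every comparison is an independent fair coin flip; the function name is invented for the example and this is not the Mozilla code. It follows both branches of every comparison and halves the probability each time.

```javascript
// Exact output distribution of merging `left` and `right` when each
// comparison is a fair coin flip. Returns a map from ordering to probability.
function randomMergeDistribution(left, right, prefix = [], prob = 1, dist = {}) {
  if (left.length === 0 || right.length === 0) {
    // One side is exhausted: the rest is appended without further comparisons.
    const key = prefix.concat(left, right).join(" ");
    dist[key] = (dist[key] || 0) + prob;
    return dist;
  }
  // Coin says the head of left is smaller.
  randomMergeDistribution(left.slice(1), right, prefix.concat(left[0]), prob / 2, dist);
  // Coin says the head of right is smaller.
  randomMergeDistribution(left, right.slice(1), prefix.concat(right[0]), prob / 2, dist);
  return dist;
}

console.log(randomMergeDistribution(["a", "b"], ["c", "d", "e"]));
```

Only the 10 interleavings that keep a before b and c before d before e can come out of the merge, and they are weighted unequally: a b c d e gets 1/4 while a c b d e gets 1/8.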

@Bugstomper, good work! I’m thinking that one could calculate the exact expected distribution. With a 5-element shuffle, the state space is small enough to do by hand. Root node is the initial state. Each call to the comparator generates one random number, and you get one child node for each of the two possibilities. The state space can’t be too big. On the other hand, I’m seeing 27 comparator calls per iteration in Internet Explorer….
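The tree walk described above is easy to automate. The following is a sketch only: it uses a plain insertion sort as the example algorithm (an assumption, not what any browser actually runs), and all names are invented. The comparator is fed a scripted list of coin flips; when the sort asks for a flip that has not been scripted yet, the search branches on both possibilities.

```javascript
// Thrown when the sort asks for more coin flips than the current script holds.
class NeedMoreFlips extends Error {}

// Plain insertion sort; lessThan(x, y) stands in for the comparison function.
function insertionSort(items, lessThan) {
  const a = items.slice();
  for (let i = 1; i < a.length; i++) {
    for (let j = i; j > 0 && lessThan(a[j], a[j - 1]); j--) {
      [a[j], a[j - 1]] = [a[j - 1], a[j]];
    }
  }
  return a;
}

// Walk the decision tree: each node is a sequence of flips so far, and each
// leaf (a completed sort) has probability 2^-(number of flips used).
function exactSortDistribution(sortFn, items) {
  const dist = {};
  const explore = (flips) => {
    let used = 0;
    const scripted = () => {
      if (used === flips.length) throw new NeedMoreFlips();
      return flips[used++];
    };
    try {
      const key = sortFn(items, scripted).join("");
      dist[key] = (dist[key] || 0) + Math.pow(0.5, flips.length);
    } catch (e) {
      if (!(e instanceof NeedMoreFlips)) throw e;
      explore(flips.concat(true));   // child node: comparator said "less than"
      explore(flips.concat(false));  // child node: comparator said "not less than"
    }
  };
  explore([]);
  return dist;
}

console.log(exactSortDistribution(insertionSort, [0, 1, 2, 3, 4]));
```

For this insertion sort the tree is at most 10 comparisons deep for 5 elements, so the whole state space is tiny. A sort that makes 27 comparator calls would have a much deeper tree, but the same approach applies.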

@Stefan W., Your “lottery” approach is an interesting one. (@Commenter suggested essentially the same approach). Note that it does require an auxiliary array equal in size to the original one. So it is not doing an in-place shuffle. But that’s fine since we only have 5 elements. And it certainly meets the requirements.

To all, I just thought of another solution. Requires 2N space for N elements, but only one call to random. Distribution is uniform. Any guesses?

““Never attribute to malice that which can be adequately explained by stupidity.” –Hanlon’s razor”

It’s a fine sentiment, but somewhat balanced out by its obvious vulnerability to exploitation by the malicious.

Google’s very good at that. ‘What, us, want to expose all your contacts to everyone else? Nooo, noo, that was just a *mistake*. We’re sorry. We’ll fix it next month sometime. Maybe.’

The method Microsoft used is the one provided by the well-regarded javascript tutorial site, JavaScriptKit.com. See http://www.javascriptkit.com/javatutors/arraysort.shtml

As I write this on 3/1/2010 (they may change the code because of this now highly publicized flaw), here is the code used to perform a “random sort” of an array (basically passing the “random” comparison function into a standard sorting function):

“Shuffling (randomizing) the order of an array

To randomize the order of the elements within an array, what we need is the body of our sortfunction to return a number that is randomly <0, 0, or >0, irrespective to the relationship between “a” and “b”. The below will do the trick:

//Randomize the order of the array:
var myarray=[25, 8, "George", "John"]
myarray.sort(function() {return 0.5 - Math.random()}) //Array elements now scrambled”

A slashdot comment indicated that many javascript coders consult javascriptkit.com for examples on how to code desired functions, and there’s a good chance that many websites are using this random sort implementation. Regardless of that, Microsoft should change the code anyway, so as not to be accused of anything.
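For contrast with the quoted snippet, here is a minimal sketch of the Fisher-Yates shuffle that has come up elsewhere in this thread. The function name and the optional random parameter are my own choices for the example; it returns a shuffled copy rather than scrambling the array in place.

```javascript
// Fisher-Yates shuffle: walk from the last position down, swapping each
// position with a uniformly chosen position at or before it.
// `random` defaults to Math.random and must return a number in [0, 1).
function fisherYatesShuffle(items, random = Math.random) {
  const a = items.slice(); // work on a copy so the caller's array is untouched
  for (let i = a.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1)); // 0 <= j <= i
    [a[i], a[j]] = [a[j], a[i]];
  }
  return a;
}

console.log(fisherYatesShuffle([25, 8, "George", "John"]));
```

It makes exactly N-1 calls to the random number generator and never calls a comparison function, so the result does not depend on which sort algorithm the browser happens to use.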

@bugstomper I’ll try to solve this and see… Thanks for that insight. Apparently not all comparison sorts are going to give the same distribution!

@bugStomper Thanks for that not so trivial explanation. This is a good exercise to do… I will get on it. Apparently all comparison sorts are not going to yield the uniform distribution of permutations… Hmm definitely not intuitive…

In case anyone’s curious, mid-last-week I bashed out 1 million trial runs of the shuffle method used in the browser ballot with several different sorting algorithms and posted the distributions.

I just coded up javascript to run the test using the Microsoft Shuffle with a set of sorting algorithms, including the Firefox internal sort (your original Microsoft Shuffle test), Merge Sort, Merge Sort preprocessed with length four insertion sort like Mozilla does, Insertion Sort, Heap Sort, Quick Sort, and then the Fisher-Yates algorithm. When the page loads it generates all 120 permutations of {0,1,2,3,4} and sorts them all with every one of the sort functions to verify that I don’t have any bugs in the sort functions themselves.
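The counting part of such a test can be sketched in a few lines. This is not my actual test page, just an illustration with invented names: it runs a shuffle function many times on {0,1,2,3,4} and tallies how often each item lands in each position. The built-in sort with a random comparator is shown as one shuffle to plug in; its results will differ from browser to browser.

```javascript
// counts[item][position] = number of trials in which `item` ended at `position`.
function tallyPositions(shuffleFn, n, trials) {
  const counts = Array.from({ length: n }, () => new Array(n).fill(0));
  for (let t = 0; t < trials; t++) {
    const arr = Array.from({ length: n }, (_, i) => i); // fresh {0,1,...,n-1}
    const out = shuffleFn(arr);
    out.forEach((item, pos) => { counts[item][pos]++; });
  }
  return counts;
}

// The shuffle under test: the built-in sort with a random comparator.
function randomComparatorShuffle(arr) {
  return arr.sort(() => 0.5 - Math.random());
}

console.table(tallyPositions(randomComparatorShuffle, 5, 100000));
```

With a uniform shuffle every cell should be close to trials/5. Rows that are clearly lopsided show which items are being favored for which positions.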

While tracking down why my early results in the merge sort were different from the Mozilla sort I noticed another optimization that they have in their code. When going to do a merge, the merge sort has two arrays that have each been sorted already which are to be merged. Also the two arrays are actually two contiguous sections of the array being sorted. If the last element of the left array is not greater than the first element of the right array, then you don’t have to do any merging, the whole combined sequence of those two array segments is already in sorted order. When the comparison function is random it means that each merge step has a 50% chance of being skipped.

That explains how the sort is so skewed: The items that end up in the first partition at the start of the algorithm (IE and Firefox in this case) have a 50% chance of never being merged into the second half of the array right off the bat.
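To make the shortcut concrete, here is a sketch of a merge step with the "already in order" check added. It is written from the description above and is not the Mozilla source; the function name is made up. With a real comparison function the check is a harmless speedup, but with a random comparator it fires about half the time and leaves the two halves exactly where they were.

```javascript
// Merge two sorted runs, skipping the merge when the last element of `left`
// is not greater than the first element of `right`.
// compare(x, y) follows the Array.prototype.sort convention (<0, 0, >0).
function mergeWithSkip(left, right, compare) {
  if (compare(left[left.length - 1], right[0]) <= 0) {
    return left.concat(right); // shortcut: runs are treated as already in order
  }
  const result = [];
  let i = 0;
  let j = 0;
  while (i < left.length && j < right.length) {
    // Take whichever head the comparator reports as smaller.
    result.push(compare(left[i], right[j]) <= 0 ? left[i++] : right[j++]);
  }
  // Append whatever remains in the run that was not exhausted.
  return result.concat(left.slice(i), right.slice(j));
}

// With a random comparator, roughly half of the merges are skipped outright.
const coinFlipCompare = () => 0.5 - Math.random();
console.log(mergeWithSkip(["a", "b"], ["c", "d", "e"], coinFlipCompare));
```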

Adding that optimization to my Merge Sort code gives me close but not identical results to the Mozilla version.

My full results are too long to post in a comment. Rob, would you want me to email you the Javascript?

@Bugstomper. Interesting. One thing I tried last night was to increase the size of the array. So instead of doing a Microsoft shuffle of 5 elements, I did a Microsoft shuffle of 25. With the caveat that this is not at all what was used in the browser ballot, the results were much starker. There was a clear diagonal band of excess counts. The effect seems to be magnified with larger arrays. If you want to send the Javascript file, my contact info is on my about page.

@Isaac, very good. I think what we’re suspecting now is that some browsers make small optimizations to the textbook sort algorithms and that these optimizations can introduce additional ways for random comparators to lead to biased results.

@Pepe, are you telling me that someone is wrong on the internet? ;-) If you look around you’ll also see bad financial advice, bad medical advice, bad nutrition advice, etc. It is not surprising that there is bad programming advice out there as well. As I said in the post, this is a well-known error, a trap that novice programmers tend to fall into.

@Adam, I’ve never seen a team made up of perfect programmers. We all make mistakes, and rookies make more mistakes. What separates high quality code from more buggy code is not the presence of super-human programmers, but the presence of quality control, at all levels. A bug in released code may have been written by one person. But many other things need to go wrong for it to make it out into high-profile released code. In other words, the modern large software organization has processes that tolerate the existence of novice programmers and puts such reviews and tests in place to ensure that the output is of sufficient quality. I say “sufficient” not “perfect” because generally the consumer neither needs nor can afford perfect software.

So, although I’m fretting about the micro-details of the algorithm choice here, because I find that interesting in my own geeky way, that doesn’t mean that the best way to prevent such problems in the future is to make all novice programmers memorize Knuth. Sure, that would not be a bad solution, IMHO. But it is not a realistic solution and probably is not the most economical solution, in terms of the effort needed to achieve a given level of quality. Remember, you could book up on Knuth all you want, but then implement the algorithm incorrectly, or introduce a typo, or an off-by-one error, or fail to check in the right version of your code, or any of a dozen other mistakes that even more experienced programmers make. To prevent this kind of thing in practice you need a quality process, both at the person level and organizational level. It is part culture, part science, part process. So if I were sitting in Redmond, I’d avoid beating up on the programmer who made the coding error and instead ask questions like: 1) Where are the test cases for this ballot? 2) Who reviewed and signed off on the test cases? 3) Where is the evidence that the test cases were actually run and passed?

To all: Here is the other solution I came up with last night. Create an array of length 2N and put the browser choices in twice, like this: {0,1,2,3,4,0,1,2,3,4}. Then take a single random number from 0 to 4. Start from that index in the array and use the 5 browser choices starting from there. So, if your random number was 3, you would use the sub-array {3,4,0,1,2}. This may be the fastest uniform solution out there, since it requires only one call to Math.random() and no exchanges. It returns a uniform distribution of positions. But it clearly is not random, since the relative positions are highly correlated.
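In JavaScript the idea might look like the sketch below. The function name and the optional random parameter are invented for the example.

```javascript
// Rotation "shuffle": duplicate the list, pick one random starting index,
// and read off N consecutive entries. Uses a single random number.
function rotationShuffle(choices, random = Math.random) {
  const doubled = choices.concat(choices);                    // length 2N
  const start = Math.floor(random() * choices.length);        // 0 .. N-1
  return doubled.slice(start, start + choices.length);
}

console.log(rotationShuffle([0, 1, 2, 3, 4]));
```

Each item is equally likely to land in each position, but only N of the N! possible orderings can ever appear, which is the sense in which it is uniform without being a random permutation.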

>> Where serious money is on the line, such as with online gambling sites, random number generators and shuffling algorithms are audited, tested and subject to inspection. I suspect that the stakes involved in the browser market are no less significant.

Microsoft doesn’t need to play games creating situations they can later exploit through tiny innocent updates to IE+Windows; however, what one must wonder is if Microsoft is making enough money to give their dealings with the EU sufficient attention and resources. [Clearly someone in Q&A dropped the ball here.]

To address this potential deficiency, has the EU considered raising a tax on computer users, a tax which can be directed to Microsoft so that Microsoft has sufficient money to better effect what the EU asks of them?

If the EU authorities need ideas, may I suggest that they adopt Microsoft software for use in government in such a way that computer users are forced to buy Microsoft software in order to preserve interoperability? This way the “tax” on computer users can even be efficiently set and collected for Microsoft by Microsoft themselves without creating yet another layer of middlemen.

Could I further suggest that the EU mandate the use of OOXML?

Lastly, Microsoft is a major supporter of software patents. While it’s true that software patents, where possible within the EU, are likely subjected to a higher standard than they are in the US, the EU could try to ensure that software patents unambiguously become as permanent a part of EU law as we can expect of any law. Monopolies, especially those that can be bought in large numbers and which have wide scope by definition, provide yet another efficient way for taxes to be raised on all EU software users for the benefit of a company like Microsoft. You can’t get much cleverer than a tax on thinking and sharing. [All Microsoft has to do is to be the first to write down general ideas of where technology is almost surely to head and they will gain entitlement to 20 years of monopoly.] And we know open source developers like to think and share products that are becoming more popular by the day. Ha! Whoever thought they could bypass Microsoft taxes by creating their own software and sharing it for free was not thinking the morning they hatched out their plot.

Some of those less familiar with open source software now may have a(nother) reason for liking open source software: Even when one company slips up, open source code (javascript in this case) means that others can catch the mistakes and provide fixes. As a byproduct, if the reason for the screw-up was bad intentions, it comes out in the wash just the same.

Now, if we can only get Microsoft to open source, not just bits of javascript, but all the tens of millions of lines of code that constitute IE+Windows+MSOffice+MSservers+…, we’d be in business.. and a whole lot safer from malware and what not.