The permanence of the written word has fascinated mankind for millennia. The powerful knew the truth of this. To be sure that his deeds would outlive his contemporaries, the Emperor Augustus had his CV engraved in bronze in his “Res Gestae Divi Augusti” (Deeds of the Divine Augustus). The bronze did not survive, but the words have. Horace wrote in his Ode, “Exegi monumentum aere perennius” (I have erected a monument more lasting than brass). And his words have survived. Shakespeare in Sonnet #55 echoed this sentiment, “Not marble, nor the gilded monuments/ Of princes shall outlive this powerful rhyme”. Shelley in his Ozymandias shows the irony of the surviving boastful inscription, “Look on my Works, ye Mighty, and despair!” beside the “colossal wreck” of an ancient monument.

The saying is “ars longa, vita brevis”: art is long, but life is short. But this is not entirely accurate. The performing arts, such as dance or music, have a very sketchy and imperfect history until the rather recent invention of written notations. So dance before around 1450 is a matter of speculation. No doubt the ancient Bacchae accompanied their ecstatic revels with an equally furious dance. But we know none of it. Thucydides has the Lacedaemonians march into battle to the accompaniment of flutes. What martial notes they played we do not know. We can only speculate, with Thomas Browne, “What song the Syrens sang”. Some performers, like Benjamin Bagby, may give a glimpse of earlier performance practice. And scholars like Milman Parry find echoes of ancient practices in traditional storytelling. But we cannot know for certain.

The structural arts (architecture, city design, aqueducts, monuments, engravings) have all fared better over time. Even scattered texts from antiquity have survived. Text can have longevity, but not unassisted. Left to the ravages of water, fire, insects and fungi, papyrus, vellum and paper will only survive a few hundred years. For a text to survive longer, someone must copy it. So the works of Cicero survive in rather good shape today, in part because Augustine of Hippo praised them. (Then as now, getting a good review from a recognized figure is the best marketing.)

Which ancient texts were copied, and thus became part of the canon of Western literature, was somewhat a matter of chance. Nine of the surviving plays of Euripides, existing in a single partial manuscript, are curiously in alphabetical order, but only containing plays beginning with the Greek letters epsilon through kappa, leading scholars to believe that this is merely volume 2 of a larger collection of plays that are lost. Euripides is believed to have written almost 100 plays. We have almost 20 of them today.

That said, the survival of a document does not depend entirely on the whims of monks or archivists. There are certain engineering principles which are key to creating a document that lends itself to long term retention. Some of these are tasks for the individual authors:

- Keep a document intact. Better to preserve a document inclusive of annexes and appendices.

- Separation of content, structure, layout and presentation

- Findability: a good title, an abstract, keywords and other metadata will help ensure that your document can be found and retrieved via current and future search technologies.

- Use of a fully-specified, open document format.

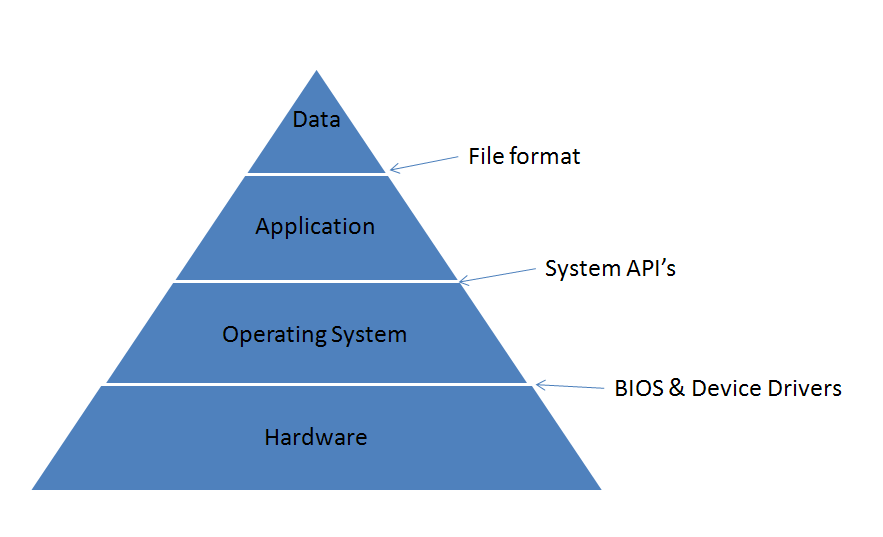

From another angle we can look at archiving from a systems view and follow a basic architectural principle. The key to durability, whether in documents, monuments, institutions, or whatever, all boils down to this: Do not depend on something less stable than yourself.

(I didn’t invent that principle, but don’t recall where I first heard it. Any idea who it was?)

If you depend on something less stable, which is to say more susceptible to change, than yourself, then when it changes, it forces you to change. Stability is when you change only when you want to change.

For example, a house is built on a foundation. A frame, plumbing and electrical, walls, wallpaper and furniture are layered on top. If replacing the wallpaper triggered a need for a new foundation, then we would say that the house was inherently unstable. But it is reasonable to expect that installing new plumbing will require opening a hole in a wall and later reapplying wallpaper. The expected rates of change of these various layers have led to a method of construction that enforces this dependency chain. If for some reason we needed to make very frequent changes to the plumbing, then we would place it outside the interior walls, or behind removable wall panels for easy access.
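The rule can be checked mechanically once each layer has an estimated rate of change. The sketch below is only an illustration: the layer names follow the house example, but the numbers and the `unstable_dependencies` helper are invented here, not measured or taken from any source.

```python
# Hypothetical change rates (expected changes per century), for illustration only.
change_rate = {
    "foundation": 0.1,
    "frame": 0.5,
    "plumbing": 3,
    "walls": 5,
    "wallpaper": 10,
}

# Each layer maps to the layers it rests on (its dependencies).
depends_on = {
    "frame": ["foundation"],
    "plumbing": ["frame"],
    "walls": ["frame", "plumbing"],
    "wallpaper": ["walls"],
}


def unstable_dependencies(change_rate, depends_on):
    """Return (dependent, dependency) pairs where the dependency changes faster."""
    return [
        (dependent, dependency)
        for dependent, dependencies in depends_on.items()
        for dependency in dependencies
        if change_rate[dependency] > change_rate[dependent]
    ]


# With the rates above every layer rests on something slower, so nothing is flagged.
print(unstable_dependencies(change_rate, depends_on))

# If the plumbing suddenly needs very frequent changes, the walls now depend on
# something less stable than themselves, and the check reports that pair.
frequent_plumbing = dict(change_rate, plumbing=20)
print(unstable_dependencies(frequent_plumbing, depends_on))
```

Moving the pipes behind removable panels corresponds to deleting the `walls -> plumbing` entry, which makes the second check come back empty again.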

We carefully manage dependency chains when programming as well. For example, imagine a module A (a database client) that depends on a module B (a database server) where you believe that module B is less stable (has a greater rate of change) than A. This is a problem, since changes to B trigger changes to A. So we define a new interface layer C (maybe SQL) that is more stable than A or B. By having A depend on C rather than B directly, we transform the unstable dependency A->B, into the stable relationship (A,B)->C, where C is a standard.
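As a minimal sketch of that transformation, assume a toy client and two toy servers. All class names here (`QueryInterface`, `ReportClient`, `ListServer`, `DictServer`) are hypothetical, and the servers ignore the SQL text entirely; the only point is that the client is written against the stable interface C and never against a particular server.

```python
from typing import List, Protocol, Tuple


class QueryInterface(Protocol):
    """Layer C: the stable contract that both sides agree on."""

    def query(self, sql: str) -> List[Tuple[str, ...]]:
        ...


class ReportClient:
    """Module A: depends only on QueryInterface, never on a concrete server."""

    def __init__(self, db: QueryInterface) -> None:
        self._db = db

    def user_names(self) -> List[str]:
        return [row[0] for row in self._db.query("SELECT name FROM users")]


class ListServer:
    """Module B, first version: keeps its rows in a list."""

    def __init__(self, names: List[str]) -> None:
        self._names = list(names)

    def query(self, sql: str) -> List[Tuple[str, ...]]:
        # Toy behaviour: the SQL text is not parsed, every row is returned.
        return [(name,) for name in self._names]


class DictServer:
    """Module B, later version: internals changed, interface unchanged."""

    def __init__(self, names: List[str]) -> None:
        self._by_id = dict(enumerate(names))

    def query(self, sql: str) -> List[Tuple[str, ...]]:
        return [(name,) for name in self._by_id.values()]


# The client code is identical for both server versions.
for server in (ListServer(["ada", "bob"]), DictServer(["ada", "bob"])):
    print(ReportClient(server).user_names())
```

The server's internals changed between the two versions, but nothing in `ReportClient` had to change, because its only dependency is the interface.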

This same principle applies to document formats as well. Never depend on something less stable than yourself. For the first few decades of document formats, the era of binary formats in the 1980’s and early 1990’s, we did this all wrong, as the following diagram shows:

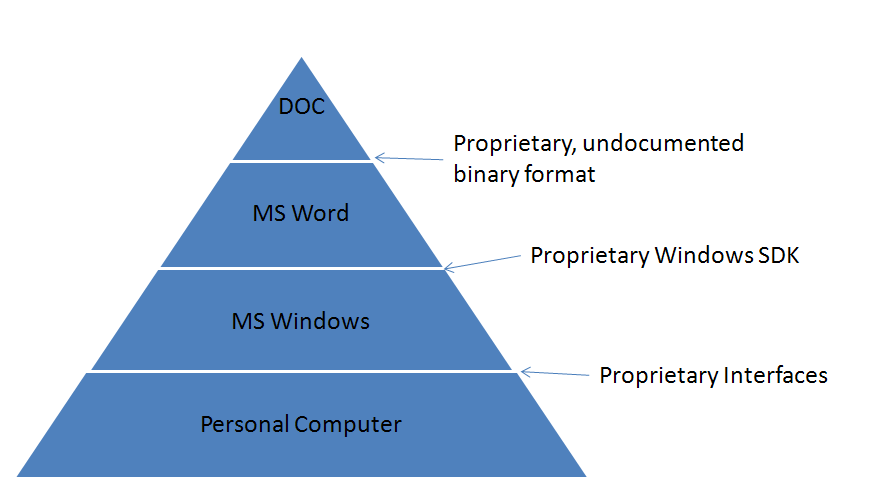

In those days the file format stood atop a large set of dependencies, and changes at any layer would lead to changes in the file formats. This created a very inflexible stack of dependencies, where changes in the less stable lower layers could trigger incompatible changes to the document format. When we see that an Excel file on the Mac has a different internal date format than an Excel file created on Windows, we are seeing remnants of this kind of dependency chain.
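To make the Excel example concrete: Excel stores a date as a serial day count, and a workbook uses either the "1900 date system" (the Windows default) or the "1904 date system" (historically the Mac default). The sketch below shows how the same stored number means two different dates. The function and constant names are illustrative, and the 1900 branch is deliberately limited to serials of 61 and above to sidestep the nonexistent 29 February 1900 that the 1900 system counts.

```python
from datetime import date, timedelta

# Effective day zero for the 1900 system, valid for serials >= 61.
EPOCH_1900 = date(1899, 12, 30)
# Day zero for the 1904 system.
EPOCH_1904 = date(1904, 1, 1)


def serial_to_date(serial: int, system: str) -> date:
    """Convert a spreadsheet serial day number to a calendar date."""
    if system == "1900":
        if serial < 61:
            raise ValueError("serials below 61 need special handling in the 1900 system")
        return EPOCH_1900 + timedelta(days=serial)
    if system == "1904":
        return EPOCH_1904 + timedelta(days=serial)
    raise ValueError(f"unknown date system: {system!r}")


stored = 39448
print(serial_to_date(stored, "1900"))  # 2008-01-01
print(serial_to_date(stored, "1904"))  # 2012-01-02
print((EPOCH_1904 - EPOCH_1900).days)  # 1462
```

A reader that ignores which system a file was written under will be off by 1462 days, roughly four years, which is the kind of platform detail that ends up leaking into a format.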

Note also that these interfaces between the layers were not standards, but proprietary interfaces. For example, a Word 95 document might be seen as this:

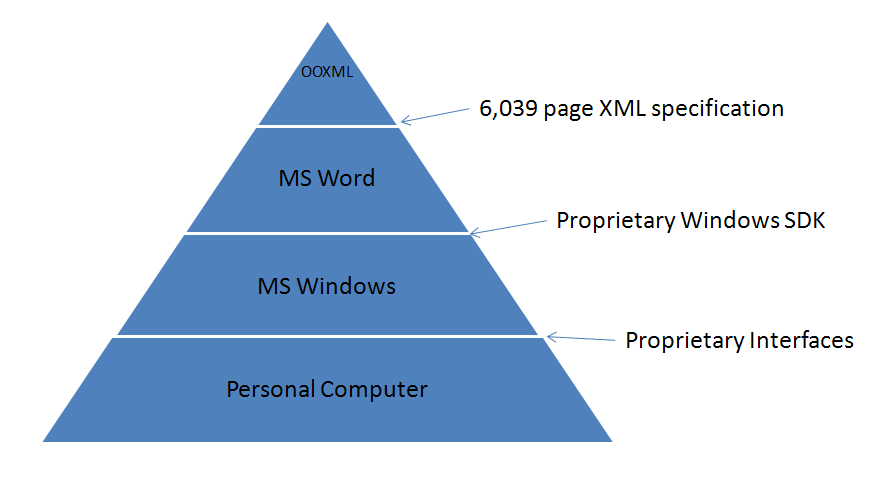

The move to XML-based file formats changes this diagram but little. The format at the top is now XML but the dependency chains are the same. The relationship of the format to the technology stack has not changed:

If using a new document format requires you to buy a new application suite, update your hardware and buy a new operating system, then that should be a clear sign that something is wrong. “The tail wags the dog,” as they say.

And note that a dependency is not the same as a layer. You can pretty things up all you want with the use of standards like XML, but still have adverse dependency chains. Taking a Microsoft Word binary format and translating it into XML, and putting it in a Technical Committee whose charter requires that it remain 100% compatible with Microsoft Word, leaves you with a file format that depends on Microsoft Word, no matter how much XML Schema and Dublin Core you throw at it. The XML is just syntactic sugar. But the essence of the dependency chain remains: OOXML depends on Word and Windows, a single vendor’s application stack. Instead of an application supporting a format, a format is supporting an application.

I should further note that a vendor, at great expense and effort, can forestall the bad effects of an unstable dependency chain, sometimes for many years. Instability, with effort, can be managed, as jugglers, unicyclists and stilt walkers remind us. Even though the Word binary format has many dependencies on the Windows platform, and on specific internals of Word and features and behaviors from earlier versions of Word, Microsoft has managed to preserve some level of compatibility with these older formats, even in current versions of Word. The support is far from perfect, and it certainly makes their file format and their applications more complicated and more expensive to work with. But that is the burden they face from bad engineering decisions back in the early 1990’s. They and their customers live with that, and though they may not realize it, they all pay a price for it.

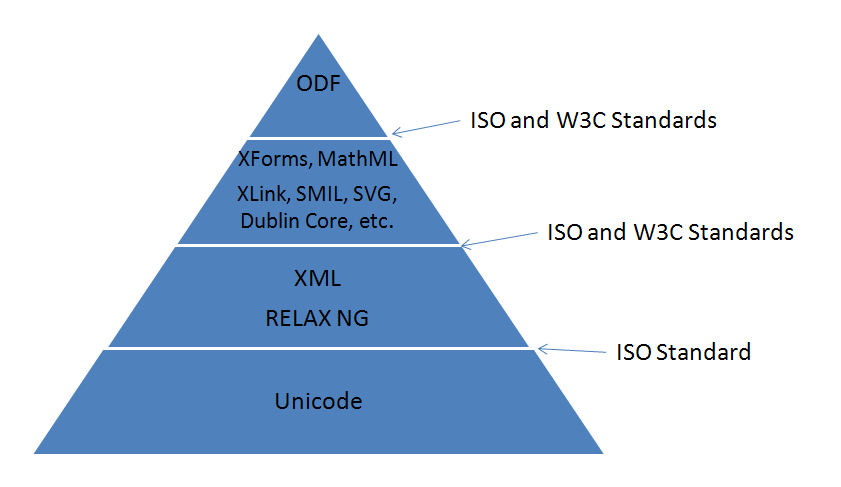

The alternate approach, the one that leads to better prospects for long term document access, is to have a stack, not of proprietary applications and interfaces, but of standards. ODF’s long-term stability and readability comes from the fact that it is built upon, and depends upon other standards that are widely-used, widely-adopted and widely-deployed. ODF is designed so the format depends on things more stable than itself, with a solid foundation as seen here:

The suitability of a format for long term archiving depends as much on the formal structure of the technological dependencies as it does on specific details of the technologies involved. The greatest technologies in the world, if assembled in an unstable dependency arrangement, will lead to an unstable system. Look at the details, certainly, but also step back and look at the big picture. What technology changes can render your documents obsolete? And who controls those technologies? And what economic incentives do they have to trigger a cascade of changes every 5 years, to force upgrades? As consumers and procurers we all need to make a decision as to whether we want to ride on that roller-coaster again.

The question we face today is whether we want to carry forward the mistakes of the past and the extensive and expensive logic required to maintain this inherently unstable duct tape and baling wire Office format, or whether we move forward to an engineered format that takes into account the best practices in XML design, reuses existing international standards, and is built upon a framework of dependencies that ensures that the format is not hostage to a chain of technologies that can be manipulated by a single vendor for their sole commercial advantage.

The standard term for the concept of having different layers, with different velocities, is “shearing layers”. How Buildings Learn is the classic book on the subject.

Document persistence is actually a serious issue in architecture, as CAD models haven’t been shown to have the longevity yet, and the Airbus A380 disaster shows it applies to engineering systems too. It is claimed that CATIA versions were the cause of incompatibility between the French and German parts of the airplane design.

Compared to that, doc files aren’t as serious. If you want long-lived printable content, PDF is actually very good. XML formats make more sense if you want to have the option of repurposing the content over many years. So does plain text, funnily enough.

-steve

You compare very strangely different pyramids.

Actually you can construct the OOXML pyramid the same way as the ODF example you place there.

OOXML is also using unicode, XML and RelaxNG and using several dedicated markup languages like dublin core and others like DrawingML that are also open due to their inclusion in the OOXML format spec.

If plain text was all we needed to worry about, then I don’t think we would be having this discussion. Documents are becoming more and more dynamic. If the understanding of my document depended on an animation, and a vendor’s forced “upgrade” destroyed that animation, then the original message of my document has been lost forever.

Richard Chapman

@Richard,

If we were all creating plain text documents, then that would be all we would need to worry about for preservation. But if we create documents with graphics, even movies, then these may need to be preserved as well.

One approach would be to have something like ODF/A, an archival profile of ODF that restricted what type of embedded media could be used. For example, it may only allow open formats like PNG images, Ogg Vorbis for audio, etc.
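A rough sketch of what a check for such a profile could look like follows. It assumes only that an ODF document is a ZIP package containing a `mimetype` entry plus XML parts; the allowlist and the `disallowed_entries` name are invented for illustration, since no ODF/A profile is defined here.

```python
import io
import zipfile
from pathlib import PurePosixPath
from typing import List

# Hypothetical allowlist for an imagined archival profile: the package's own
# XML parts plus the open media formats mentioned above.
ALLOWED_SUFFIXES = {".xml", ".png", ".ogg"}


def disallowed_entries(package: bytes) -> List[str]:
    """List package entries whose file type falls outside the allowlist."""
    with zipfile.ZipFile(io.BytesIO(package)) as archive:
        return sorted(
            name
            for name in archive.namelist()
            if not name.endswith("/")  # skip directory entries
            and name != "mimetype"  # the package's own type marker
            and PurePosixPath(name).suffix.lower() not in ALLOWED_SUFFIXES
        )


# Build a tiny stand-in package in memory to exercise the check.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("mimetype", "application/vnd.oasis.opendocument.text")
    archive.writestr("content.xml", "<doc/>")
    archive.writestr("Pictures/chart.png", b"placeholder bytes")
    archive.writestr("Pictures/logo.wmf", b"placeholder bytes")

print(disallowed_entries(buffer.getvalue()))  # ['Pictures/logo.wmf']
```

A real profile would need to inspect the manifest and the actual file contents rather than trusting file extensions; this only shows the shape of the restriction.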

Also, having a structured document, with clearly defined headers, footnotes, etc., defined by specific markup rather than merely placed conventionally in expected locations, is a necessity for the tools that allow screen readers and similar tools to read documents for the blind.

So one way or another, documents built with markup rather than plain text are here to stay.

@Wraith,

These diagrams are illustrating the critical dependencies, not the traditional layered architectural diagram. Maybe directed arrows would work better than pyramids? But I think the approach I used better illustrates the idea that the technology at the base is the most stable, etc.

Although OOXML uses XML, Unicode, etc., this is certainly not a dependency for them. In particular, their use of bitmasks that map directly into Windows platform API’s, undocumented binary inclusions, even provisions for Unicode characters not permitted in XML, these all show that the XML is just a trivial wrapper layer, not a dependency or a constraint.

When you ask “Why did OOXML do something in a particular way?”, the answer is rarely, “Because it is good XML style to do so”. The answer more typically is, “Because that is how Word does it”. That is why I say that Word and Windows are the primary dependencies for OOXML.

@wraith

My first reaction was much like yours, that this was a somewhat confused apples vs. oranges comparison. But upon reflection, there is an important kernel of truth in Rob’s argument. There is no denying that the design of OOXML is based upon the older binary model. Microsoft’s fundamental claim for using OOXML is that it offers better integration with existing documents. This means that they have to design the XML to imitate/integrate with/replicate the data structures in the older binary specifications. So Microsoft has to defend design decisions made for a different medium (binary) in their XML.

Even though they use XML, there are some pretty serious design mismatches. The most glaring one to me is using bitmasks in XML. The O’Reilly XSLT Cookbook shows how you can handle bitmasks, but it is a kludge and you have to make assumptions about mapping bit arrays to numbers. Endian-ness (big vs. little) means that the XSLT will not be portable between computer architectures. If you can show me a better XSLT transform, I would be happy to retract this criticism. I do not want to hear about some non-XSLT tool that runs on .Net 3.0.
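To illustrate the mismatch outside of XSLT, here is a small sketch contrasting a bitmask attribute with explicit attributes. The element, the attribute names and the flag table are all made up for the example and are not taken from the OOXML specification.

```python
import xml.etree.ElementTree as ET

# Hypothetical flag table. With a bitmask this mapping lives outside the
# markup, in spec prose or application source, and every consumer must copy it.
FLAG_NAMES = {0x01: "header_row", 0x02: "total_row", 0x04: "banded_rows"}

masked = ET.fromstring('<table look="05"/>')
explicit = ET.fromstring('<table header_row="true" banded_rows="true"/>')


def flags_from_mask(element):
    """Decode a hex bitmask attribute using the out-of-band flag table."""
    value = int(element.get("look"), 16)
    return {name for bit, name in FLAG_NAMES.items() if value & bit}


def flags_from_attributes(element):
    """Read flags that the markup itself spells out."""
    return {name for name, value in element.attrib.items() if value == "true"}


print(sorted(flags_from_mask(masked)))          # ['banded_rows', 'header_row']
print(sorted(flags_from_attributes(explicit)))  # ['banded_rows', 'header_row']
```

Both forms carry the same information, but only the second can be queried with ordinary XML tools. XPath 1.0 has no bitwise operators, so the first form forces the kind of arithmetic workaround described above.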

I also find it curious that you claim that including dedicated markup languages in the OOXML standard is somehow equivalent with using existing standards. Especially since your first example (Dublin Core) is not defined by the OOXML format spec, although it does appear that the standard does define what subset of the Dublin Core is used. Perhaps ‘dublin core’ refers to the subset of ‘Dublin Core’ that OOXML supports.

The reason that I want existing standards to be used is that I am lazy. Somebody else has already implemented tools to manipulate standard XML, so I can simply use debugged tools that I can often find with Google in a few minutes. So to me, the fact that OOXML defines some new dedicated markup languages is a pain in the backside, not a feature. I may have to write more code, especially if I’m not coding in .Net.